Building Reliable and Accurate Analytics Agents: Part 1

Why AI analytics tools don't always give accurate answers and one way to fix it. Learn how context injection improves accuracy for business queries.

Why AI analytics tools don't always give accurate answers, and one way to fix it.

Every team building AI-powered analytics hits the same wall: the LLM hallucinates.

Not dramatically wrong answers, just subtly incorrect ones. A column name that doesn't exist. A metric calculated slightly off. A query that works on one dataset but fails on another because of timezone differences.

The LLM is doing what LLMs do—pattern matching from training data. But pattern matching isn't enough when you're querying actual business data. You need precision.

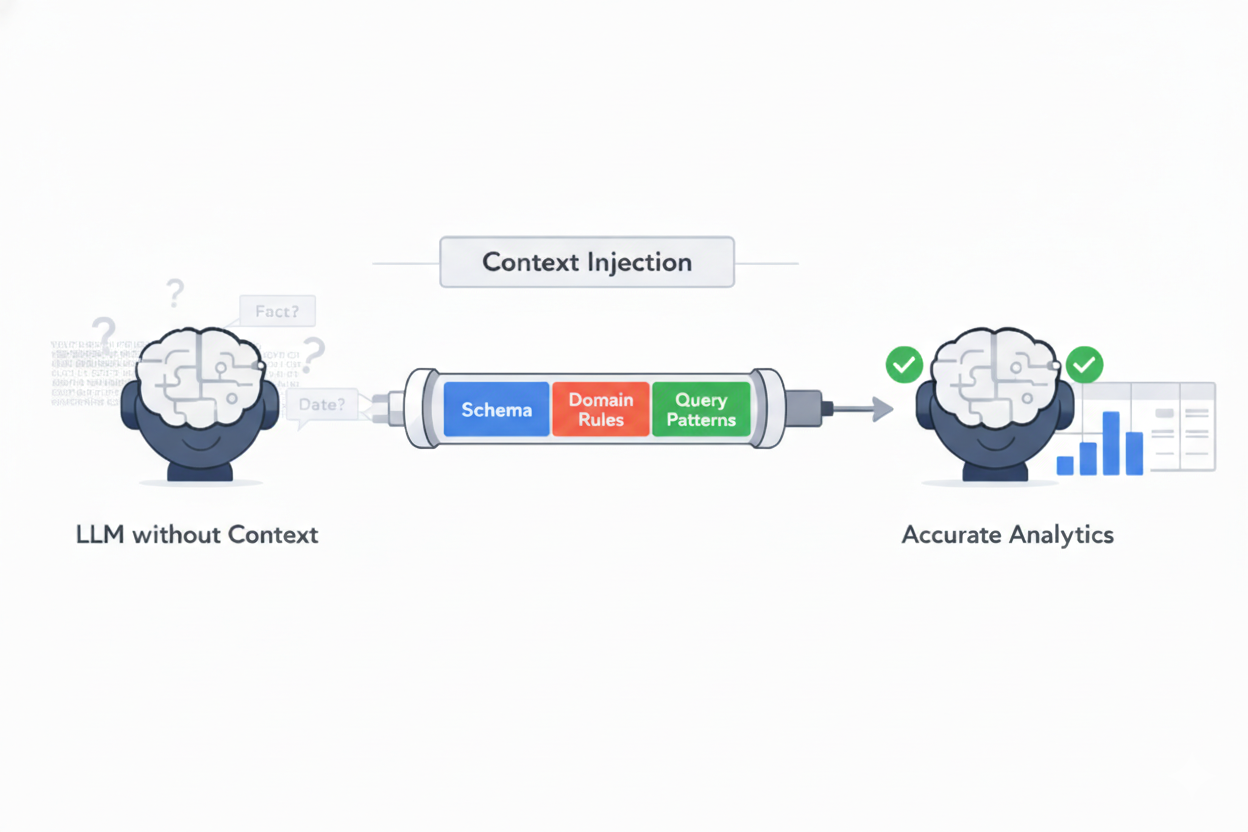

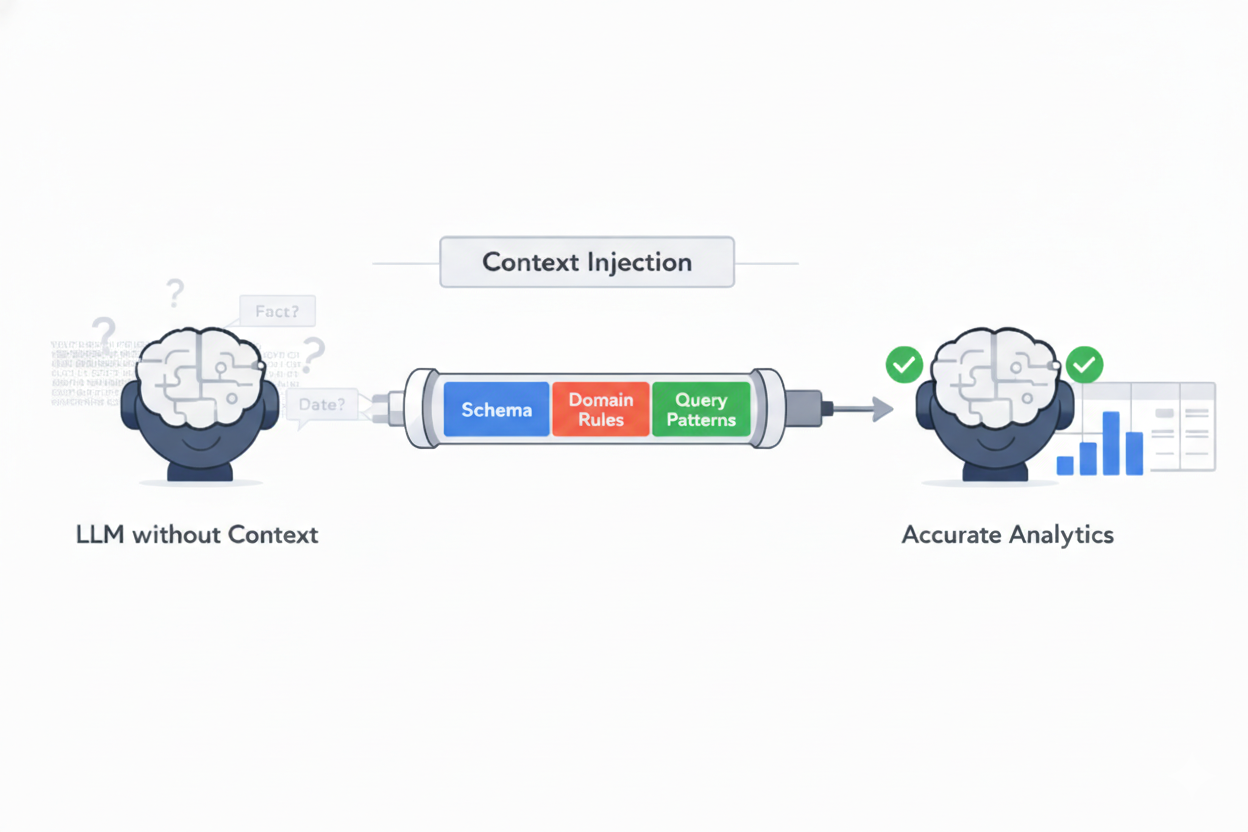

This post covers one method we use to improve accuracy for common business queries: context injection.

Ask an agent, with no extra context, to write SQL for "show me revenue by product last month", and the result can go wrong in several ways, for example by using total_price for net revenue.

The errors add up: wrong columns, wrong date handling, wrong metric definitions, and missing business context. For tools that inform business decisions, "mostly right" can lead to wrong business outcomes.

The idea is simple: give the LLM what it needs to answer accurately every time.

Instead of hoping the model recalls the right schema from training data, inject what it needs to know before it reasons. Dynamically assemble relevant context at query time.
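Query-time assembly can be sketched in a few lines. This is a minimal illustration, not our production code: `ContextItem`, `build_prompt`, the context categories, and the `orders` table with its columns are all hypothetical names invented for the example.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    kind: str  # hypothetical categories, e.g. "schema", "metric", "convention"
    text: str


def build_prompt(question: str, items: list[ContextItem]) -> str:
    """Place the injected context ahead of the question so the model reads it first."""
    sections = [f"[{item.kind}] {item.text}" for item in items]
    return (
        "Use only the context below when writing SQL.\n\n"
        "Context:\n" + "\n".join(sections) + "\n\n"
        f"Question: {question}\nSQL:"
    )


# Hypothetical context for the revenue question; table and column names are invented.
items = [
    ContextItem("schema", "orders(order_id, product_id, total_price, refund_amount, created_at)"),
    ContextItem("metric", "net revenue = SUM(total_price - refund_amount)"),
    ContextItem("convention", "created_at is stored in UTC; 'last month' means the previous calendar month"),
]

prompt = build_prompt("show me revenue by product last month", items)
print(prompt)
```

The resulting string would then be sent to whichever model the agent uses. The point of the sketch is only the ordering: definitions and conventions arrive before the question, so the model has less to guess.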

What to inject:

Traditional analytics agents make the LLM do everything: understand the question, figure out the columns from the schema, write the SQL query, and handle the edge cases. Each step is a chance for errors to pile up.

Context injection changes this. You front-load the information as context, so the LLM can focus on the one thing it's actually good at: understanding intent and mapping it to the right data.

The key insight: minimize guesswork, maximize context.

When an LLM has the information it needs, it doesn't need to guess. When it doesn't need to guess, it hallucinates far less. When it hallucinates less, your analytics are more accurate.

Context injection isn't easy, though. There are real engineering challenges to handle:

Context window limits: You can't inject everything. You need to be selective about what context is relevant for each user query. This requires understanding your data and your users' questions.

Maintenance: Domain knowledge needs to stay up to date, and the injected context has to be updated whenever the schema changes. This is ongoing work, not a one-time setup.

Diminishing returns: More context isn't always better. Past a certain point, extra context confuses the LLM instead of helping it. Finding the right balance takes repeated experiments and measurement.
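One way to handle the context window constraint is to score candidate snippets against the question and keep only the best ones that fit a budget. The sketch below is a rough illustration under stated assumptions: keyword overlap stands in for a real relevance measure, a word count stands in for a token count, and `select_context` and the snippets themselves are hypothetical.

```python
import re


def words(text: str) -> set[str]:
    """Lowercased identifier-like words, used for a crude relevance score."""
    return set(re.findall(r"[a-z_]+", text.lower()))


def select_context(question: str, snippets: list[str], budget_words: int) -> list[str]:
    """Greedily keep the most relevant snippets that fit within the word budget."""
    q = words(question)
    scored = [(len(q & words(s)), s) for s in snippets]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep input order

    chosen, used = [], 0
    for overlap, snippet in scored:
        cost = len(snippet.split())
        if overlap == 0 or used + cost > budget_words:
            continue  # skip irrelevant snippets and ones that would exceed the budget
        chosen.append(snippet)
        used += cost
    return chosen


# Hypothetical snippets; names and definitions are invented for illustration.
snippets = [
    "revenue: net revenue is total_price minus refund_amount",
    "product: products table has product_id and product_name",
    "employees: headcount excludes contractors",
    "month: reporting months follow the UTC calendar",
]

chosen = select_context("show me revenue by product last month", snippets, budget_words=15)
for snippet in chosen:
    print(snippet)
```

With a budget of 15 words, only two of the three relevant snippets fit and the unrelated headcount snippet is dropped outright. Raising or lowering `budget_words` is the knob you would vary when measuring where extra context stops helping.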

After building analytics agents across different data sources and domains, we have learnt a few lessons:

Context quality beats context quantity. Curated, relevant context outperforms dumping in everything loosely related to the question. The model needs signal, not noise.

Domain knowledge is a moat. Any team can give an LLM a schema. The hard part is concisely encoding the business logic, gotchas, and tribal knowledge that make queries actually correct.

Pre-routing beats post-correction. It's much easier to prevent errors with good context than to detect and fix them after the intermediate output has been generated.

Measure accuracy, not vibes. You need evals with verified-correct answers. Without measurement, you're just going by your gut feeling.
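An eval of this kind can be as small as a fixture database plus a set of questions paired with verified-correct SQL. The following is a sketch under assumptions, not a description of our harness: it uses an in-memory SQLite database, an invented `orders` table, and a `fake_agent` function that stands in for the real agent.

```python
import sqlite3


def run(conn: sqlite3.Connection, sql: str) -> list:
    """Execute a query and return its rows sorted, so row order doesn't matter."""
    return sorted(conn.execute(sql).fetchall())


def accuracy(conn, cases, generate_sql) -> float:
    """Fraction of cases where the generated SQL returns the same rows as the golden SQL."""
    correct = 0
    for question, golden_sql in cases:
        try:
            ok = run(conn, generate_sql(question)) == run(conn, golden_sql)
        except sqlite3.Error:
            ok = False  # a query that fails to execute counts as wrong
        correct += ok
    return correct / len(cases)


# Hypothetical fixture data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (product TEXT, total_price REAL, refund_amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [("a", 100.0, 10.0), ("a", 50.0, 0.0), ("b", 80.0, 80.0)],
)

# Each case pairs a question with SQL a human has verified as correct.
cases = [
    ("net revenue by product",
     "SELECT product, SUM(total_price - refund_amount) FROM orders GROUP BY product"),
    ("order count by product",
     "SELECT product, COUNT(*) FROM orders GROUP BY product"),
]


def fake_agent(question: str) -> str:
    # Stand-in for the real agent: counts correctly, but reports gross instead of net revenue.
    if "count" in question:
        return "SELECT product, COUNT(*) FROM orders GROUP BY product"
    return "SELECT product, SUM(total_price) FROM orders GROUP BY product"


score = accuracy(conn, cases, fake_agent)
print(f"accuracy: {score:.0%}")
```

Comparing result rows instead of SQL text lets differently written but equivalent queries pass, while the subtly wrong revenue query still fails. Running the same cases with and without injected context gives a number to compare.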

Context injection is one method among several for building reliable AI analytics.

The teams getting results from AI analytics aren't using better models. They're investing in the infrastructure around the model: generating, storing, and retrieving context efficiently.

The model is commoditized. The context is the differentiator.

At Gamgee, we build analytics agents that businesses actually trust. See how it works.