Part 1 of the Hypabase Memory Series

When we store knowledge, we make a choice about structure. That choice determines what we can retrieve later. Most AI memory systems choose wrong.

When Academia Realized Pairs Weren't Enough

In 1973, Claude Berge published "Graphs and Hypergraphs," formalizing a structure mathematicians had been circling for decades. The insight was simple: why should an edge connect exactly two nodes?

The motivation came from real problems. Database theorists needed to model functional dependencies — "columns A and B together determine column C" is a three-way relationship, not a pair. VLSI designers needed to model netlists — a wire connects multiple pins, not just two. Biologists needed to model chemical reactions — three reactants producing two products isn't a series of pairs.

The pairwise model that dominated computer science — social networks (person → follows → person), knowledge graphs (entity → relation → entity), property graphs (node → edge → node) — was an abstraction of convenience, not necessity.

But here's the problem: real-world facts aren't pairwise.

The Medical Record That Broke Binary Graphs

Consider what happens when a doctor enters a note:

"Dr. Smith prescribed aspirin to Patient 123 for a headache at Mercy Hospital on Tuesday."

That's one fact. One event. But it involves five entities: Dr. Smith, aspirin, Patient 123, headache, Mercy Hospital (plus a timestamp).

In a binary knowledge graph, you have two options — both bad.

Option 1: Multiple edges, lost coherence

(dr_smith) --prescribed--> (aspirin)

(dr_smith) --treated--> (patient_123)

(patient_123) --diagnosed_with--> (headache)

(prescription) --location--> (mercy_hospital)

(prescription) --date--> (tuesday)

Five edges. The fact that these belong to a single event is now implicit. A query for "what happened at Mercy Hospital on Tuesday" must somehow reassemble these fragments. If another prescription happened the same day, the edges interleave. The structure that made the original fact coherent is gone.
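A minimal Python sketch of that interleaving, assuming a second, hypothetical prescription (ibuprofen for patient_456) was written the same day. The triples and the helper name are illustrative and not taken from any of the systems discussed in this article:

```python
# Hypothetical triples for two prescriptions written on the same day.
# Node and relation names are illustrative assumptions.
triples = [
    ("dr_smith", "prescribed", "aspirin"),
    ("dr_smith", "treated", "patient_123"),
    ("patient_123", "diagnosed_with", "headache"),
    ("dr_smith", "prescribed", "ibuprofen"),
    ("dr_smith", "treated", "patient_456"),
    ("patient_456", "diagnosed_with", "back_pain"),
]


def objects(subject, relation):
    """Return every object linked to `subject` by `relation`."""
    return {o for s, r, o in triples if s == subject and r == relation}


drugs = objects("dr_smith", "prescribed")
patients = objects("dr_smith", "treated")

# Every (drug, patient) pairing is consistent with the stored edges,
# so the graph alone cannot say who received which drug.
candidate_pairings = {(d, p) for d in drugs for p in patients}
```

Two real events produce four candidate pairings. The edges never recorded which drug went to which patient, so nothing downstream can rule out the two wrong ones.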

Option 2: Reification — fake nodes to hold things together

(p:Prescription)

(dr_smith) --agent--> (p)

(p) --medication--> (aspirin)

(p) --patient--> (patient_123)

(p) --reason--> (headache)

(p) --location--> (mercy_hospital)

(p) --date--> (tuesday)

Now there's an artificial "Prescription" node that exists only to group edges. Six edges plus a synthetic node. The schema complexity explodes. Every n-ary fact needs its own reification pattern.
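To make the cost concrete, here is a small Python sketch of reading one reified event back out of a triple list. The node id `p1` and the `reassemble` helper are assumptions made for illustration:

```python
# Reified form: a synthetic node "p1" stands in for the event.
# All identifiers here are illustrative assumptions.
reified = [
    ("dr_smith", "agent", "p1"),
    ("p1", "medication", "aspirin"),
    ("p1", "patient", "patient_123"),
    ("p1", "reason", "headache"),
    ("p1", "location", "mercy_hospital"),
    ("p1", "date", "tuesday"),
]


def reassemble(event_id, triples):
    """Collect every edge touching the synthetic node into one record."""
    record = {}
    for s, r, o in triples:
        if s == event_id:
            record[r] = o      # edge points away from the event node
        elif o == event_id:
            record[r] = s      # edge points into the event node
    return record


event = reassemble("p1", reified)
```

The grouping is recoverable, but only by knowing that `p1` is a grouping node and walking all six edges around it, in both directions.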

This is the reification problem in RDF. It's not a bug; it's a fundamental limitation of pairwise edges.

This is how Mem0, Zep, Neo4j Agent Memory, Cognee, LangMem, and most graph-based memory systems store facts today — as binary triples (entity → relation → entity). It's an improvement over flat vector stores, but it still fragments multi-participant events into disconnected edges that must be reassembled at query time.

Enter the Hyperedge

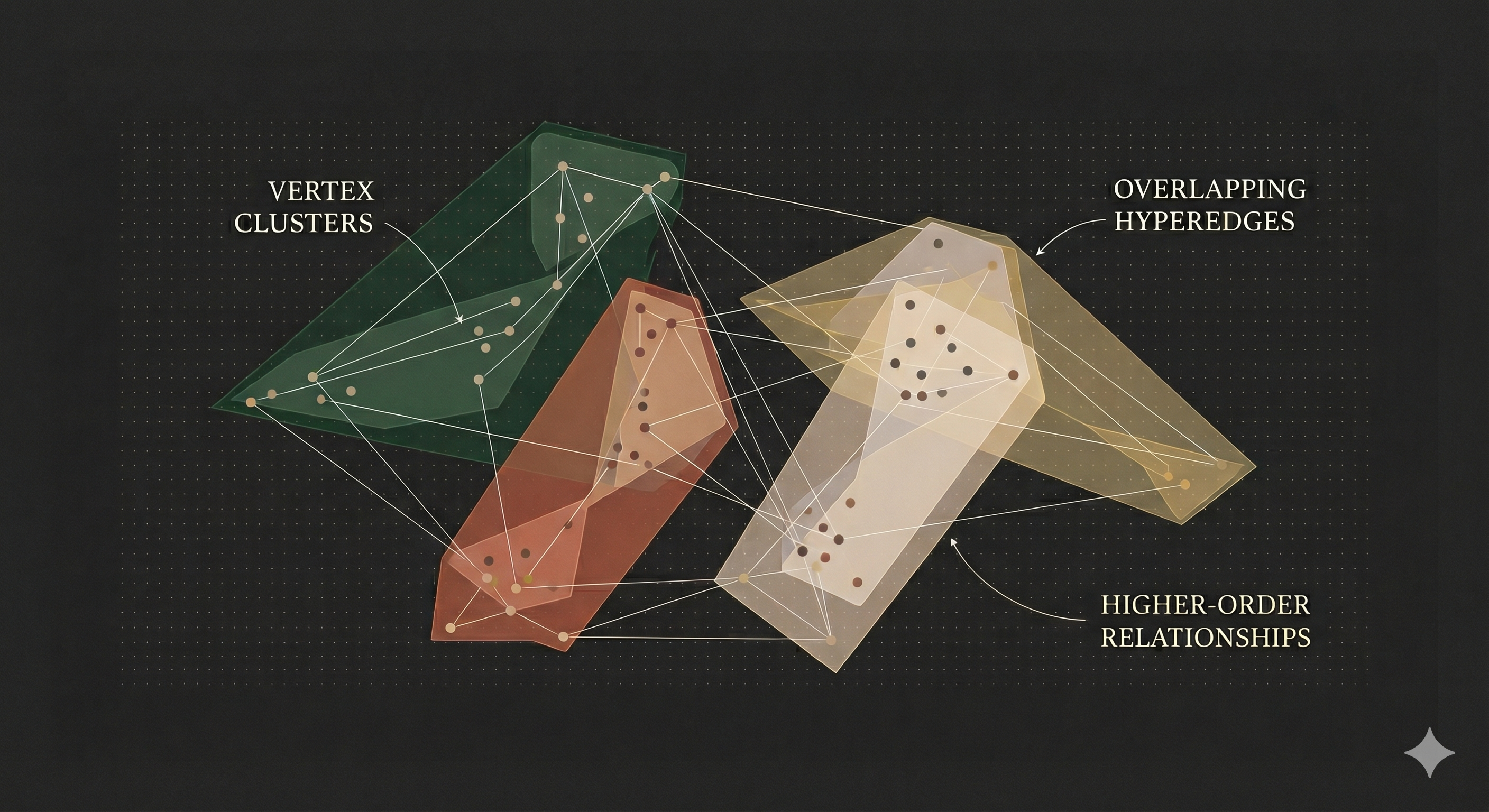

A hypergraph generalizes graphs by allowing edges to connect any number of nodes. The mathematical definition comes from Claude Berge's work in the 1970s, but the intuition is simple: an edge is a set of nodes, not a pair.

The prescription becomes:

hb.edge(
    ["dr_smith", "patient_123", "aspirin", "headache", "mercy_hospital"],
    type="prescription",
    properties={"date": "tuesday"},
)

One edge. Five nodes. The structure of the original fact is preserved exactly.
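For intuition, the "edge is a set of nodes" idea fits in a few lines of Python. This is a toy sketch, not the Hypabase implementation: the `TinyHypergraph` class, its `incidence` index, and the `edges_containing` method are assumptions. Only the shape of the `edge(...)` call mirrors the snippet above.

```python
from collections import defaultdict


class TinyHypergraph:
    """Toy in-memory hypergraph; an illustrative assumption, not Hypabase."""

    def __init__(self):
        self.edges = []                    # position in the list is the edge id
        self.incidence = defaultdict(set)  # node -> ids of edges containing it

    def edge(self, nodes, type, properties=None):
        """Store one hyperedge over any number of nodes and index it."""
        edge_id = len(self.edges)
        self.edges.append(
            {"nodes": frozenset(nodes), "type": type, "properties": properties or {}}
        )
        for node in nodes:
            self.incidence[node].add(edge_id)
        return edge_id

    def edges_containing(self, *nodes):
        """Return edges that contain every one of the given nodes."""
        if not nodes:
            return []
        ids = set.intersection(*(self.incidence.get(n, set()) for n in nodes))
        return [self.edges[i] for i in sorted(ids)]


hb = TinyHypergraph()
hb.edge(
    ["dr_smith", "patient_123", "aspirin", "headache", "mercy_hospital"],
    type="prescription",
    properties={"date": "tuesday"},
)
matches = hb.edges_containing("patient_123", "mercy_hospital")
```

The incidence index maps each node to the edges it takes part in, so a multi-node lookup becomes a set intersection that returns whole events.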

This isn't just cleaner notation. It changes what retrieval can do.

The Structural Ceiling Argument

Here's the thesis: retrieval cannot recover information that representation discards.

When you split a five-entity fact into five binary edges, you lose the grouping. No retrieval algorithm (not embeddings, not reranking, not chain-of-thought) can recover that the edges belong together unless you store that information somewhere.

The representation sets a ceiling. Everything downstream is bounded by it.

Consider a query: "What medications was Patient 123 given for headaches?"

In the binary graph, you need to:

- Find edges where patient_123 is the patient

- Find edges where the reason is headache

- Join these somehow to find the medication

- Hope no other prescriptions interfere

In the hypergraph, you:

- Find edges containing patient_123 and headache

- Read the medication from the same edge

The hyperedge concentrates relevance. All the information needed to answer the query lives in one retrievable unit.
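The two query plans can be compared side by side in Python. This sketch assumes Patient 123 has a second, hypothetical prescription (ibuprofen for back pain). It also assumes the hyperedges carry role labels so the medication can be read back by name. All data and function names are illustrative:

```python
# Binary form: two prescriptions for the same patient, as triples.
triples = [
    ("patient_123", "diagnosed_with", "headache"),
    ("patient_123", "diagnosed_with", "back_pain"),
    ("patient_123", "prescribed", "aspirin"),
    ("patient_123", "prescribed", "ibuprofen"),
]

# Hypergraph form: one record per event. The role labels are an
# assumption made for this sketch.
hyperedges = [
    {"patient": "patient_123", "reason": "headache", "medication": "aspirin"},
    {"patient": "patient_123", "reason": "back_pain", "medication": "ibuprofen"},
]


def binary_answer(patient, reason):
    """Join the patient's diagnosis edges with their prescription edges."""
    has_reason = (patient, "diagnosed_with", reason) in triples
    meds = {o for s, r, o in triples if s == patient and r == "prescribed"}
    # Nothing ties a medication to a reason, so all of them come back.
    return meds if has_reason else set()


def hyperedge_answer(patient, reason):
    """Filter whole events, then read the medication from the same edge."""
    return {
        e["medication"]
        for e in hyperedges
        if e["patient"] == patient and e["reason"] == reason
    }


from_binary = binary_answer("patient_123", "headache")
from_hyperedges = hyperedge_answer("patient_123", "headache")
```

The binary join returns both aspirin and ibuprofen, while the hyperedge filter returns only aspirin. The difference comes entirely from what was stored, not from how cleverly it was queried.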

Where Hypergraphs Already Work

This isn't theoretical. Hypergraphs are the natural representation in several domains:

Chemistry: A reaction isn't "A reacts with B." It's "A + B + catalyst → C + D under conditions E." Reaction networks are naturally modeled as hypergraphs, with each reaction stored as a single hyperedge.

Biology: Protein complexes involve multiple proteins functioning together. Gene regulatory networks involve multiple genes and transcription factors. Binary graphs require awkward encodings.

Databases: A functional dependency in relational theory (A, B → C) relates three attributes at once, not two. Relational database theory has long used hypergraphs to model schemas, for example in the study of acyclic database schemes.

Collaboration: "Alice, Bob, and Carol co-authored a paper" is one fact. Splitting it into three "co-author" edges loses the information that they all collaborated together versus in pairs.
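The collaboration case is easy to check in code. The sketch below flattens two different co-authorship histories into pairwise edges and compares the results. The data and the `pairwise_projection` helper are illustrative assumptions:

```python
from itertools import combinations


def pairwise_projection(hyperedges):
    """Flatten each hyperedge into the set of unordered pairs it implies."""
    pairs = set()
    for edge in hyperedges:
        pairs.update(frozenset(p) for p in combinations(sorted(edge), 2))
    return pairs


# One paper written by all three authors together.
one_joint_paper = [{"alice", "bob", "carol"}]

# Three different papers, each written by a different pair.
three_separate_papers = [{"alice", "bob"}, {"bob", "carol"}, {"alice", "carol"}]

same_projection = (
    pairwise_projection(one_joint_paper)
    == pairwise_projection(three_separate_papers)
)
```

Both histories collapse to the same three pairwise edges, so `same_projection` is `True`. A binary graph cannot tell them apart, while the hypergraph keeps them distinct.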

GraphRAG vs HyperGraphRAG

The AI retrieval community is starting to recognize this. Recent papers explicitly address the limitation:

GraphRAG (Microsoft, 2024) builds knowledge graphs from documents for retrieval. It uses binary edges and handles n-ary facts through hierarchical summarization: it groups related entities into communities and generates summaries that aggregate their edges. It works, but it's a patch on a structural limitation.

HyperGraphRAG (NeurIPS 2025) directly compares binary and hypergraph representations on retrieval tasks across medicine, agriculture, computer science, and law. Their finding: hypergraph representations consistently improve retrieval precision because they preserve the relational structure of facts.

Cog-RAG (AAAI 2026) uses a "dual-hypergraph" architecture: one hypergraph for theme-level retrieval, one for entity-level. Both are hypergraphs, not binary graphs, because the authors found binary representations couldn't capture the multi-entity relationships needed for accurate retrieval. Cog-RAG achieved state-of-the-art results on the UltraDomain and MIRAGE benchmarks, winning 88-99% of head-to-head comparisons against NaiveRAG on comprehensiveness, empowerment, and relevance metrics across domains including medicine (Neurology, Pathology), computer science, and agriculture.

The pattern is clear: when retrieval accuracy matters, researchers reach for hypergraphs.

The OpenCog Precedent

This isn't even new in AI. OpenCog's AtomSpace, designed in the 2000s for artificial general intelligence research, represents knowledge as a "metagraph": essentially a hypergraph in which edges can also connect to other edges.

Ben Goertzel's team made the same observation we're making: knowledge is naturally higher-order. Forcing it into binary relations loses information. AtomSpace was designed for AGI-scale reasoning, but the structural insight applies equally to the more modest problem of agent memory.

What This Means for AI Memory

When an AI agent hears "I bought a bookshelf from a thrift store two weeks ago and am now repainting it," that's one fact with multiple participants: the user, a bookshelf, a thrift store, a time reference, and a current activity.

Store it as binary edges:

(user, bought, bookshelf)

(bookshelf, source, thrift_store)

(purchase, time, two_weeks_ago)

(user, repainting, bookshelf)

The connection between buying and repainting, that it's the same bookshelf, is now implicit. Retrieve "bookshelf" and you get fragments. The agent has to reassemble them, hoping nothing interferes.

Store it as a hyperedge:

(bought :subject user :object bookshelf :source thrift_store
        :locus two_weeks_ago :memory_type episodic)

(repainting :subject user :object bookshelf :tense present)

Two edges, each coherent. The bookshelf node appears in both, creating a graph connection. Query for the bookshelf and you get complete facts. No reassembly needed.
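A rough Python rendering of those two hyperedges shows what "query for the bookshelf" returns. The dict layout, the `METADATA_KEYS` set, and the `facts_about` helper are assumptions for illustration. Only the role names come from the notation above:

```python
# Each hyperedge is a predicate plus role -> participant bindings.
# The dict layout is an assumption; role names follow the notation above.
memory = [
    {"predicate": "bought", "subject": "user", "object": "bookshelf",
     "source": "thrift_store", "locus": "two_weeks_ago",
     "memory_type": "episodic"},
    {"predicate": "repainting", "subject": "user", "object": "bookshelf",
     "tense": "present"},
]

# Keys that describe the edge itself rather than naming a participant.
METADATA_KEYS = {"predicate", "memory_type", "tense"}


def facts_about(node):
    """Return every hyperedge in which `node` fills some role."""
    return [
        edge for edge in memory
        if any(v == node for k, v in edge.items() if k not in METADATA_KEYS)
    ]


bookshelf_facts = facts_about("bookshelf")
thrift_facts = facts_about("thrift_store")
```

Looking up `bookshelf` returns both complete events, each with its own participants attached. Looking up `thrift_store` returns the purchase with the bookshelf and the time reference still bound to it.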

The hypergraph doesn't just store the same information more cleanly. It stores more information: the grouping structure that binary graphs discard.

The Representation Thesis

Here's what we believe, and what the rest of this series will explore:

Current AI memory systems obsess over retrieval (better embeddings, smarter reranking, fancier RAG pipelines) while ignoring how extracted knowledge is stored. That's backwards.

The structure in which you store knowledge determines the ceiling of what you can retrieve. Retrieval innovations operate below that ceiling. They can't recover what the representation threw away at storage time.

Hypergraphs preserve the natural structure of facts. They're the foundation. The next articles in this series cover what we build on that foundation: semantic roles to label participants, notation to extract structure from language, memory types to model forgetting, and retrieval algorithms that exploit graph structure.

But it all starts here: with edges that can hold more than two things.

Hypabase Memory: Hypergraphs as Foundation

Hypabase Memory is built on a hypergraph engine. Every memory becomes a hyperedge connecting all participants in a single unit — preserving the complete structure of facts and making retrieval return coherent events rather than fragments to reassemble.

Next in series: What Ancient Sanskrit Solves in AI Memory