What Ancient Sanskrit Solves in AI Memory

Pāṇini's 2,500-year-old kāraka roles provide a minimal, language-universal vocabulary for labeling how participants relate to actions — turning vague entity lookups into structured semantic search.

Pāṇini's 2,500-year-old kāraka roles provide a minimal, language-universal vocabulary for labeling how participants relate to actions — turning vague entity lookups into structured semantic search.

Stay updated

Get notified when we publish new research on AI memory and agents.

Part 2 of the Hypabase Memory Series

We established that hypergraphs preserve the natural structure of facts — one edge can connect five entities instead of fragmenting them into pairs. But connection isn't enough. We need to know how each entity participates.

"Alice gave Bob a book" and "Bob gave Alice a book" contain the same three entities. The difference is who did what to whom. The solution? A 2,500-year-old grammatical framework from ancient India that modern NLP has been slowly rediscovering.

Consider a hyperedge connecting: [Alice, Python, Java]

What does this mean? Without labels, we can't know:

The entities are connected. The semantics are lost.

Binary knowledge graphs solve this with typed edges: (Alice) --prefers--> (Python). But we've already established why binary edges fail for n-ary facts. We need role labels inside hyperedges.

In the 5th century BCE, the Sanskrit grammarian Pāṇini wrote the Aṣṭādhyāyī — eight chapters of grammatical rules that formalized the Sanskrit language. It's considered the first formal grammar in human history and influenced everything from Chomsky's generative grammar to modern dependency parsing.

Pāṇini's key insight for our purposes: semantic roles are independent of word order and surface syntax.

In English, "Alice gave Bob a book" and "The book was given to Bob by Alice" mean the same thing despite different word orders. Pāṇini formalized this with kāraka — semantic roles that describe how participants relate to an action regardless of how the sentence is structured.

The word kāraka comes from Sanskrit कारक, meaning "that which brings about" or "that which is instrumental in the action."

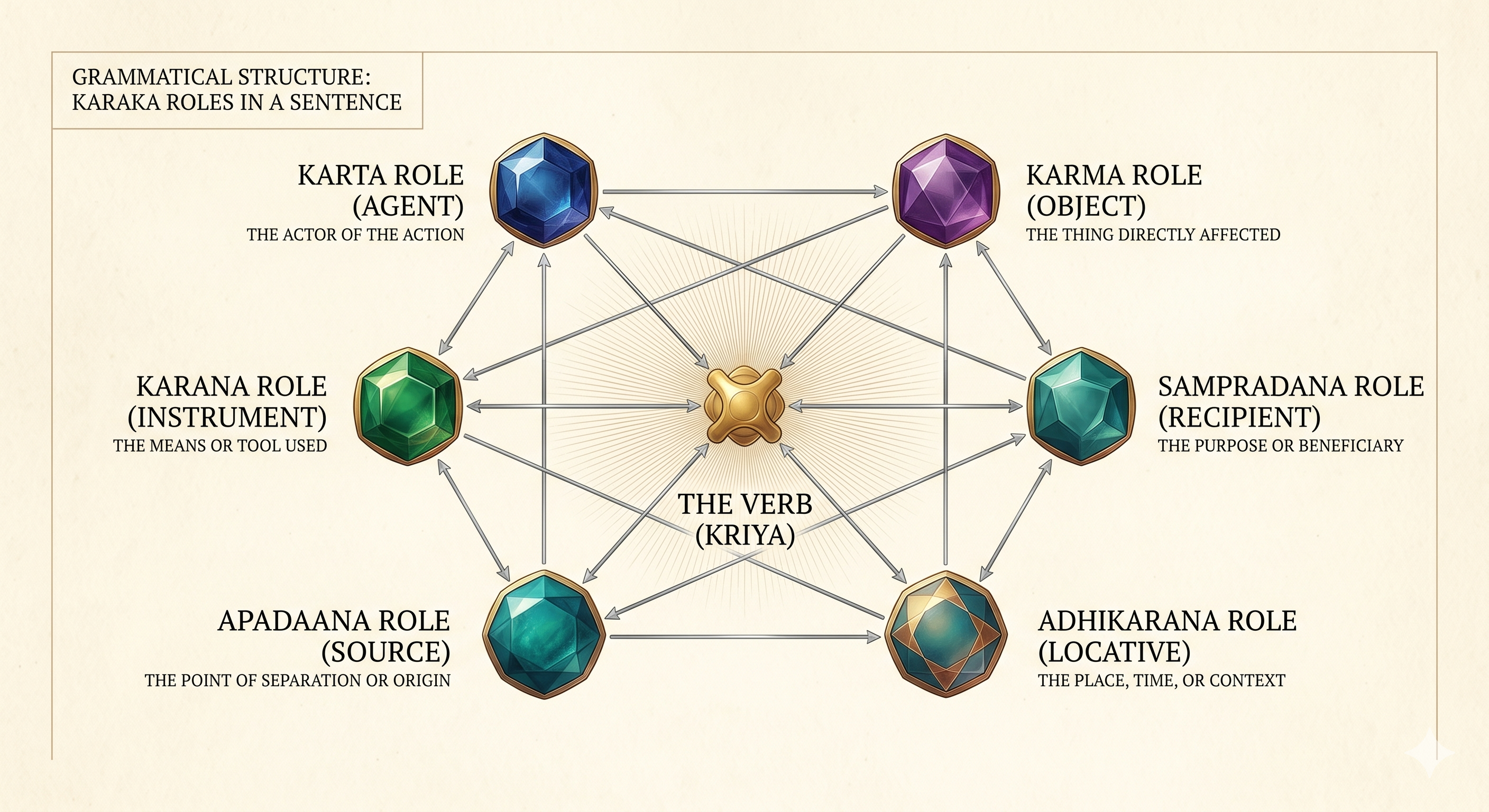

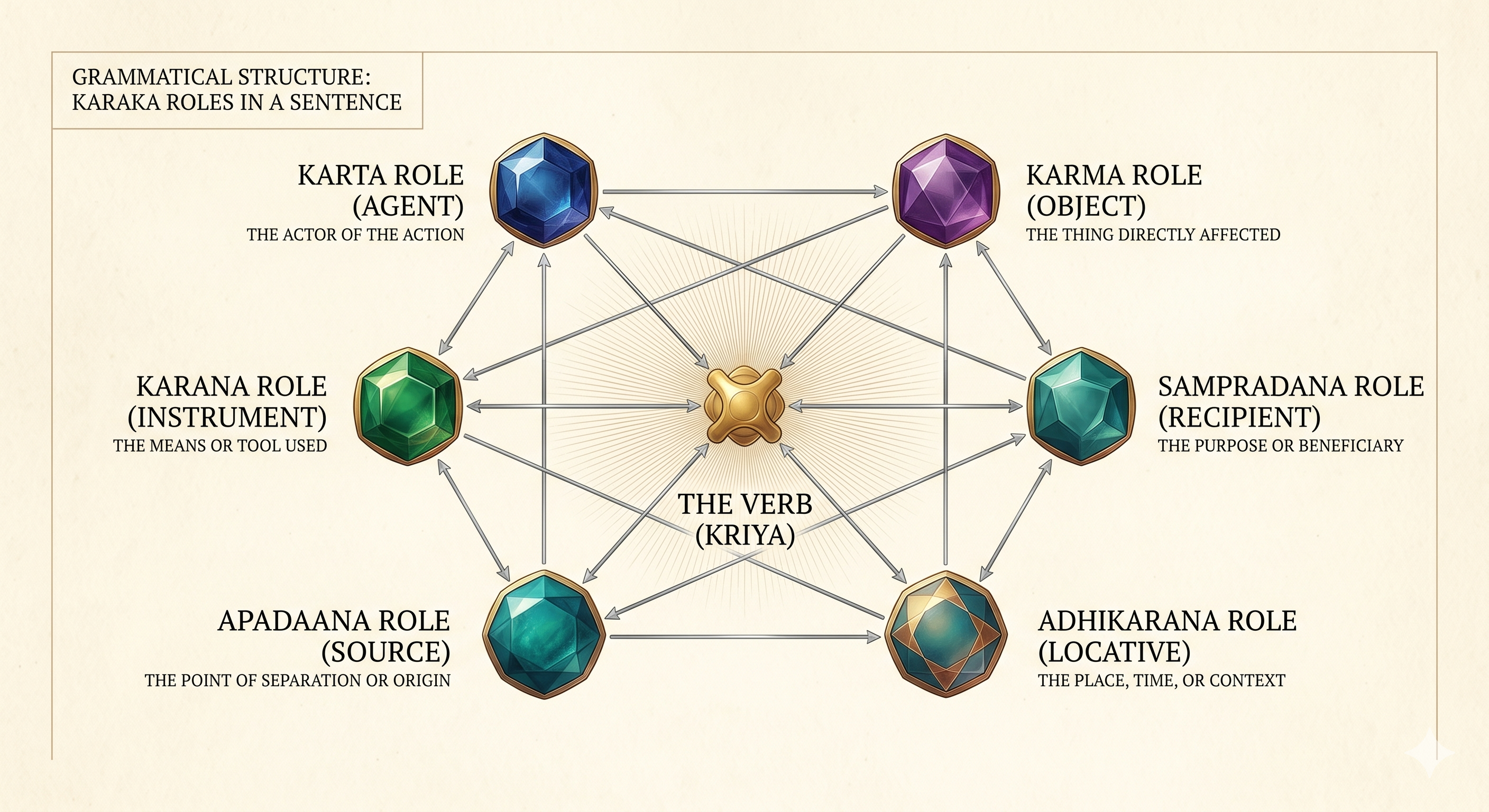

Pāṇini defined six primary kāraka roles. We use eight, adding two for attribute-value relationships common in factual memory:

| Role | Sanskrit | Meaning | Question Answered |

|---|---|---|---|

| subject | kartā (कर्ता) | The agent, doer | WHO does it? |

| object | karma (कर्म) | The patient, thing affected | WHAT is affected? |

| instrument | karaṇa (करण) | The means or tool | HOW / WITH WHAT? |

| recipient | sampradāna (सम्प्रदान) | The beneficiary, receiver | TO WHOM? |

| source | apādāna (अपादान) | The origin, starting point | FROM WHERE? |

| locus | adhikaraṇa (अधिकरण) | Location in space or time | WHERE / WHEN? |

| attribute | viśeṣaṇa (विशेषण) | A property being described | WHICH PROPERTY? |

| value | māna (मान) | The value of that property | WHAT VALUE? |

Now our hyperedge has structure:

(prefers

:subject Alice

:object Python

:source Java)

This reads: Alice (subject/agent) prefers Python (object/patient) over Java (source/origin of comparison).

Three reasons:

1. Language-universal coverage

English prepositions are a mess. "I cut the bread with a knife" (instrument) vs "I went with Alice" (accompaniment) vs "I'm happy with the result" (cause) — same preposition, different semantics.

Kāraka roles are semantic, not syntactic. They transfer across languages because they describe meaning, not surface form. "Alice gave Bob a book" in English, Japanese, Arabic, or Swahili has the same kāraka structure: agent, recipient, patient.

2. Minimal sufficient set

PropBank has 35+ argument roles. FrameNet has hundreds of frame-specific roles. AMR has 100+ relations.

Kāraka has 6-8 roles that cover the vast majority of semantic relationships. It's not that finer distinctions don't exist — it's that finer distinctions rarely matter for memory retrieval. "Who did Alice give the book to?" doesn't care whether the recipient was a benefactive-dative or a goal-dative.

Fewer roles mean simpler extraction, more consistent storage, and more predictable retrieval.

3. Questions map to roles

Each kāraka role corresponds to a natural question:

When a user asks "Who did Alice meet?", the system knows to look for edges where Alice is the subject and return the recipients or objects. The mapping is direct.

| System | # of Roles | Designed For | Limitation for Memory |

|---|---|---|---|

| Kāraka | 8 | Universal grammar | — |

| PropBank | 35+ | Verb-specific annotation | Too fine-grained, varies by verb |

| FrameNet | 100s | Frame semantics | Requires frame identification |

| AMR | 100+ | Sentence meaning | English-centric, complex |

| Schema.org | 1000s | Web markup | Domain-specific explosion |

Kāraka sits at the sweet spot: enough expressiveness to distinguish "Alice gave Bob" from "Bob gave Alice," not so much that extraction becomes unreliable or storage becomes inconsistent.

Not all roles are equally important for finding relevant memories. In ACT-R cognitive architecture, this is called the "fan effect" — cues connected to many items provide less activation per item than cues connected to few.

We model this with role weights on a relative scale:

| Role | Weight | Rationale |

|---|---|---|

| subject | highest | Agents are highly diagnostic — "who did it" is usually the key question |

| object | high | Patients matter almost as much — "what was affected" |

| recipient | medium-high | Narrows search to specific targets |

| instrument | medium | How something happened — useful but contextual |

| source | medium-low | Origins provide context |

| attribute, value | lower | Descriptive properties, often shared across memories |

| locus | lowest | Locations and times are frequently shared — "at the office" matches many memories |

A match on subject contributes roughly 2.5× more to relevance than a match on locus. This reflects intuition: "memories involving Alice" (subject match) is more specific than "memories at the office" (locus match).

These weights feed into spreading activation during retrieval — more on that in the retrieval article.

Here's how real memories get structured:

"Bob sent the report to Carol using email yesterday"

(sent

:subject Bob

:object "the report"

:recipient Carol

:instrument email

:locus yesterday

:memory_type episodic)

"Alice believes Bob prefers tea" (nested structure)

(believes

:subject Alice

:object (prefers :subject Bob :object tea))

The nested example shows compositionality — a belief about a preference. The outer structure (Alice believes X) uses kāraka roles. The inner structure (Bob prefers tea) also uses kāraka roles.

You might wonder: why not let the verb carry the semantic weight? "Alice gave Bob a book" — surely "gave" tells us the roles?

Two problems:

1. Verbs are ambiguous

"Alice ran the meeting" (subject = agent) "The meeting ran late" (subject = patient/theme)

Same verb, different role assignments. The verb alone doesn't determine who does what.

2. Implicit roles

"The door opened" — who opened it? The subject is the patient (the door), but there's an implicit agent. Without explicit role labels, this is indistinguishable from "Alice opened" where the subject is the agent.

Kāraka roles make the semantics explicit. The verb provides the action type; the roles provide the participant structure.

Labeling roles correctly requires understanding the sentence. This is non-trivial.

Consider: "Alice was given a promotion by the board."

Passive voice flips the surface subject and deep subject:

We use LLMs for extraction precisely because they handle this. The LLM reads the sentence, understands the semantics, and outputs the correct role assignments:

(gave

:subject "the board"

:object "a promotion"

:recipient Alice)

The representation is language-agnostic even though the extraction isn't.

The payoff comes at query time.

Query: "What has Alice given to people?"

The system interprets this as:

Without role labels, this query would require pattern matching across edge types, hoping the schema consistently puts givers first. With kāraka, it's a structured filter.

Query: "What tools does the team use for deployment?"

Interpretation:

The question "what tools" maps to the instrument role. The system doesn't need to understand English prepositions — it searches structured roles.

Kāraka roles only make sense with hyperedges.

In a binary graph, each edge has exactly two nodes — there's no room for labeled roles beyond "source" and "target" of the directed edge. You could add role information as edge properties, but then you're encoding n-ary structure in a binary container.

A hyperedge naturally accommodates multiple participants with distinct roles:

Hyperedge: prescription_001

Type: prescribed

Participants:

- dr_smith (role: subject)

- patient_123 (role: recipient)

- aspirin (role: object)

- headache (role: source) // reason for prescription

- mercy_hospital (role: locus)

The hyperedge holds the fact. The kāraka roles label the participants. Together, they preserve both the structure and the semantics of the original information.

Hypabase Memory uses kāraka roles to label every participant in a hyperedge. This enables precise queries ("find where Alice is the subject") and role-weighted retrieval (subject matches rank higher than locus matches) — turning vague entity lookups into structured semantic search.

Previous: Hypergraph Based Memory: Why Pairwise Graph Memory Isn't Enough for AI Agents

Next in series: The Embedding Problem: Extracting Structure from Text