The Embedding Problem: Extracting Structure from Text

Text embeddings conflate structure — 'Alice gave Bob a book' and 'Bob gave Alice a book' have nearly identical embeddings. Structured extraction with Kāraka roles recovers what embeddings lose.

Part 3 of the Hypabase Memory Series

"Alice gave Bob a book" and "Bob gave Alice a book" have nearly identical embeddings — same words, same length, similar meaning. But the facts are opposite. Who gave, who received — this structure is invisible to vector similarity.

This is the embedding problem. And it's why most RAG-based memory systems fail at precise recall.

The user says: "I bought a hybrid bike from REI last month and I've been using it for my commute."

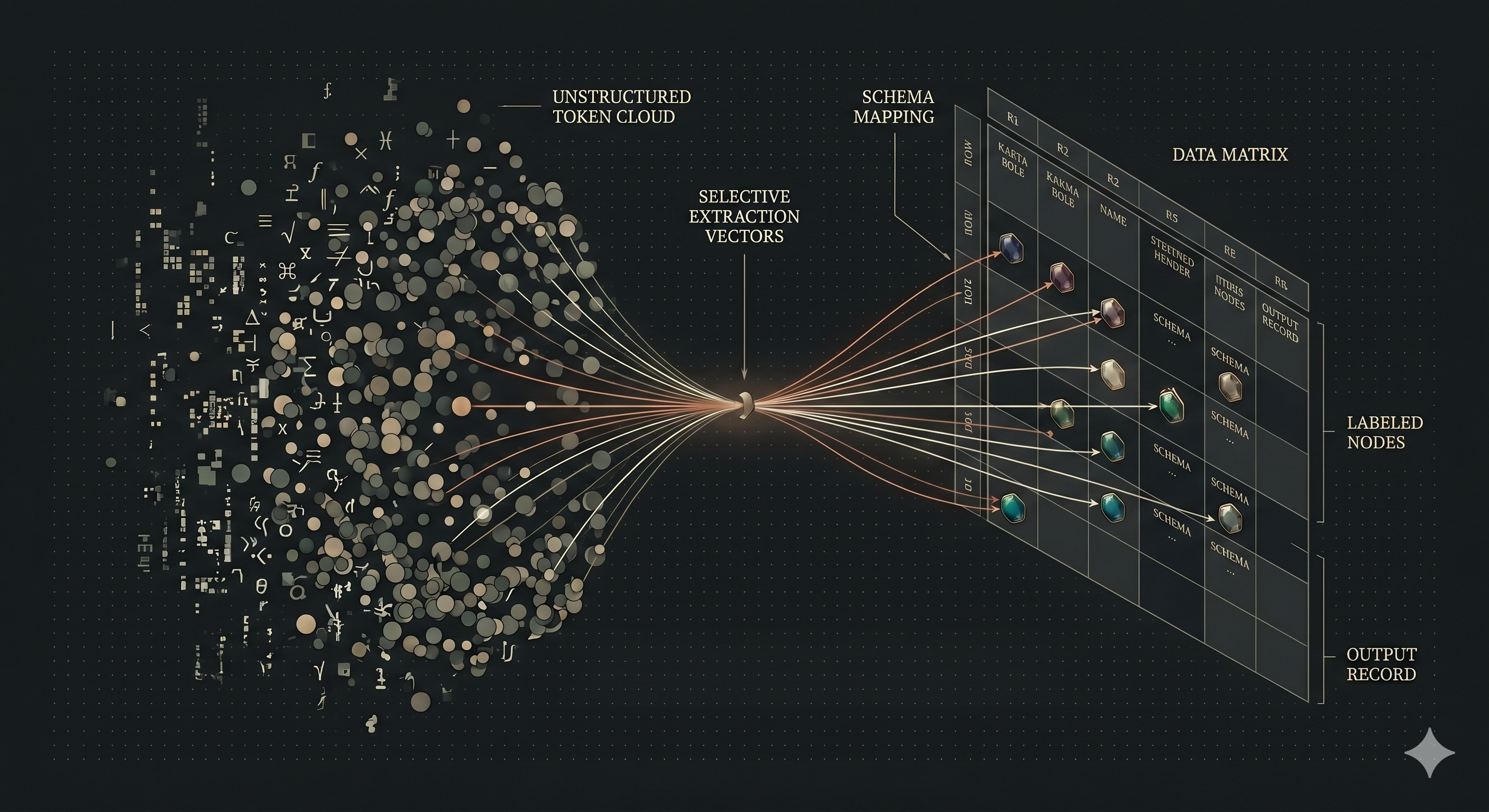

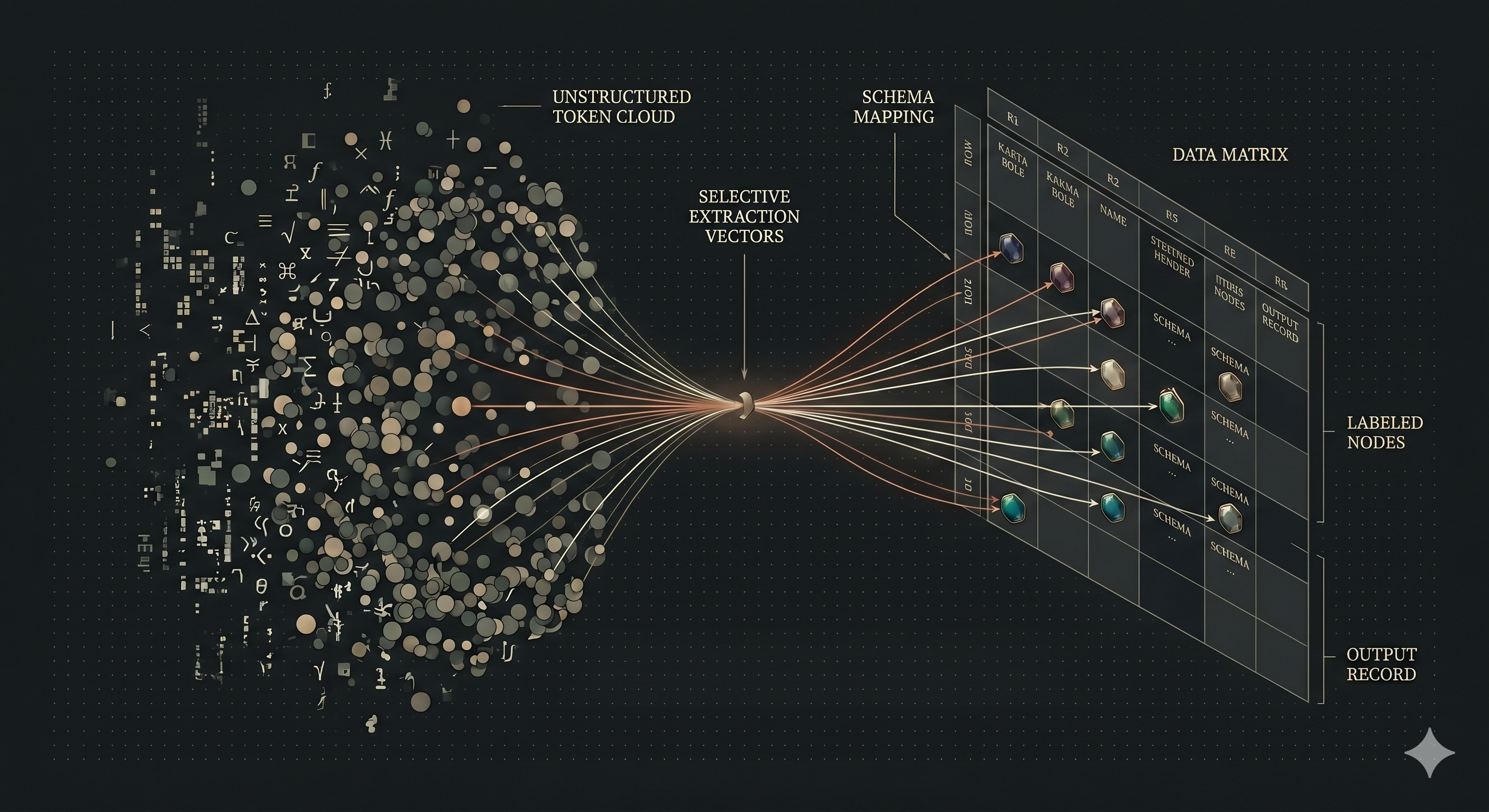

The memory system needs to store this as structured hyperedges — not as a text chunk, not as disconnected triples, but as coherent facts with labeled participants. We have hypergraphs to hold n-ary facts and Kāraka roles to label participants. Now we need to extract these structures from natural language.

Most AI memory systems skip this step. They store conversation chunks as text, embed them, and retrieve by similarity. Simple, but limited.

The problem: text embeddings conflate structure. The two "gave" sentences above land almost on top of each other in vector space, yet they state different facts. Who gave, who received, what was given: none of this is visible to similarity search.

Structured extraction recovers what embeddings lose. Instead of storing text, we extract the underlying fact:

(gave :subject Alice :object book :recipient Bob)

Now "who gave" has an answer: Alice. "Who received" has an answer: Bob. The structure is explicit, queryable, and preserved.
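A minimal Python sketch of the same point. The `Fact` type and `bag_of_words` helper are hypothetical names introduced for illustration, and the bag-of-words comparison is only a crude stand-in for the intuition behind embedding similarity, not how an embedding model works:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    """A verb plus role-labelled participants (hypothetical helper type)."""
    verb: str
    roles: tuple  # tuple of (role, filler) pairs, e.g. (("subject", "Alice"), ...)

    def filler(self, role):
        """Return the participant filling `role`, or None if the role is absent."""
        return dict(self.roles).get(role)


def bag_of_words(sentence):
    """Order-insensitive view of a sentence: a crude stand-in for similarity."""
    return sorted(sentence.lower().split())


first = Fact("gave", (("subject", "Alice"), ("object", "book"), ("recipient", "Bob")))
second = Fact("gave", (("subject", "Bob"), ("object", "book"), ("recipient", "Alice")))

# The two sentences contain exactly the same words...
same_words = bag_of_words("Alice gave Bob a book") == bag_of_words("Bob gave Alice a book")
# ...but the role-labelled facts are different objects.
same_fact = first == second

print(same_words)                 # True
print(same_fact)                  # False
print(first.filler("recipient"))  # Bob
```

Once roles are explicit, "who received" is a field lookup rather than a similarity guess.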

Our target representation is a hyperedge with Kāraka-labeled participants. For the bike example:

Input: "I bought a hybrid bike from REI last month"

Output:

(bought
  :subject user
  :object "hybrid bike"
  :source REI
  :locus "last month"
  :memory_type episodic)

This is one hyperedge connecting four entities: user, hybrid bike, REI, and a time reference. Each entity has a role label. The edge has a type (bought) and metadata (episodic memory).

The notation we use is PENMAN — an S-expression format from computational linguistics. But the notation is incidental. What matters is the structure: verb + participants with roles + metadata.
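To make the shape concrete, here is a small hand-rolled reader for the role-labelled S-expressions shown in this post. It is a sketch under assumptions: the `parse` function and the dictionary layout are illustrative, it is not the parser Hypabase uses, and it keeps `:memory_type` in the same dictionary as the roles for simplicity.

```python
import re

# Tokens: parentheses, double-quoted strings, or bare symbols such as :subject or REI.
TOKEN = re.compile(r'\(|\)|"[^"]*"|[^\s()]+')


def parse(text):
    """Parse one '(verb :role filler ...)' expression into a nested dict."""
    tokens = TOKEN.findall(text)
    node, pos = _parse_node(tokens, 0)
    if pos != len(tokens):
        raise ValueError("unexpected tokens after the closing parenthesis")
    return node


def _parse_node(tokens, pos):
    if pos + 1 >= len(tokens) or tokens[pos] != "(":
        raise ValueError("expected '(' followed by an edge type")
    head = tokens[pos + 1]
    pos += 2
    roles = {}
    while pos < len(tokens) and tokens[pos] != ")":
        label = tokens[pos]
        if not label.startswith(":"):
            raise ValueError(f"expected a role label, got {label!r}")
        pos += 1
        if pos >= len(tokens) or tokens[pos] == ")":
            raise ValueError(f"role {label} has no filler")
        if tokens[pos] == "(":
            # A nested expression fills this role.
            filler, pos = _parse_node(tokens, pos)
        else:
            filler = tokens[pos].strip('"')
            pos += 1
        roles[label[1:]] = filler
    if pos >= len(tokens):
        raise ValueError("missing ')'")
    return {"type": head, "roles": roles}, pos + 1


edge = parse('(bought :subject user :object "hybrid bike" '
             ':source REI :locus "last month" :memory_type episodic)')
print(edge["type"])             # bought
print(edge["roles"]["object"])  # hybrid bike
```

The same reader handles nested expressions, because a filler is allowed to be another parenthesised edge.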

The 8 Kāraka roles provide a fixed vocabulary for extraction:

| When you see... | Extract as... |
|---|---|
| Who did the action | :subject |
| What was affected | :object |
| Who/what benefited | :recipient |
| What tool/method was used | :instrument |
| Where something came from | :source |
| Where/when it happened | :locus |
| What property is described | :attribute |
| What value it has | :value |

This vocabulary is closed. Every participant in every fact gets one of these eight labels. No schema drift, no ad-hoc invention.

Compare to free-form extraction where each fact might use different labels: "buyer", "purchaser", "customer" for the same semantic role. Closed vocabulary means consistent storage and predictable retrieval.
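A closed vocabulary is easy to enforce at write time. The sketch below is illustrative only: `validate_roles` is a hypothetical helper, and treating `memory_type` as an allowed metadata key is an assumption based on the examples in this post.

```python
# The eight role labels from the table above.
KARAKA_ROLES = frozenset({
    "subject", "object", "recipient", "instrument",
    "source", "locus", "attribute", "value",
})

# Assumption: metadata keys are checked separately from participant roles.
METADATA_KEYS = frozenset({"memory_type"})


def validate_roles(labels):
    """Raise ValueError if any label falls outside the closed vocabulary."""
    unknown = sorted(set(labels) - KARAKA_ROLES - METADATA_KEYS)
    if unknown:
        raise ValueError(f"unknown role labels: {', '.join(unknown)}")
    return True


validate_roles(["subject", "object", "source", "locus", "memory_type"])  # passes

try:
    validate_roles(["buyer", "object"])
except ValueError as err:
    print(err)  # unknown role labels: buyer
```

Rejecting "buyer" at the door is what keeps "buyer", "purchaser", and "customer" from ever becoming three different labels in storage.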

Multi-participant event:

"Bob sent the quarterly report to Alice using the shared drive yesterday."

(sent
  :subject Bob
  :object "quarterly report"
  :recipient Alice
  :instrument "shared drive"
  :locus yesterday
  :memory_type episodic)

One hyperedge, five participants, each with a distinct role. The complete event is captured as a single retrievable unit.

Nested belief:

"Alice thinks Bob prefers tea."

(thinks
  :subject Alice
  :object (prefers :subject Bob :object tea))

The :object of "thinks" is itself a structured fact. Nesting preserves attribution — we know it's Alice's belief about Bob's preference, not a direct observation.
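One way to see what nesting buys is to walk the structure and record who holds each inner fact. This is a sketch with assumed names (`attributed_facts`, the dictionary layout); it treats the :subject of the outer edge as the holder of anything nested inside it, which is a simplification.

```python
belief = {
    "type": "thinks",
    "roles": {
        "subject": "Alice",
        "object": {"type": "prefers", "roles": {"subject": "Bob", "object": "tea"}},
    },
}


def attributed_facts(edge, holders=()):
    """Yield (holders, edge_type, flat_roles) for an edge and every edge nested in it.

    `holders` lists, outermost first, whose belief each fact sits inside.
    """
    flat, nested = {}, []
    for role, filler in edge["roles"].items():
        if isinstance(filler, dict):
            nested.append(filler)
        else:
            flat[role] = filler
    yield holders, edge["type"], flat
    # Simplifying assumption: the outer :subject is the one holding the inner fact.
    holder = edge["roles"].get("subject")
    for inner in nested:
        yield from attributed_facts(inner, holders + (holder,))


for holders, edge_type, roles in attributed_facts(belief):
    print(holders, edge_type, roles)
# () thinks {'subject': 'Alice'}
# ('Alice',) prefers {'subject': 'Bob', 'object': 'tea'}
```

The preference fact comes back tagged with ('Alice',), so a retrieval layer can tell a reported belief from a direct observation.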

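With structured extraction, queries map to role filters. "What did I buy from REI?" becomes a lookup for bought edges with :subject user and :source REI; "What does Bob prefer?" becomes a lookup for prefers edges with :subject Bob.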

Without structure, these queries rely on embedding similarity — hoping "prefer" and "like" are close enough, hoping "REI" appears in relevant text. Structure makes retrieval deterministic.
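A role filter can be as simple as an exact match on edge type and role fillers. The `find` function below is a hypothetical illustration over plain dictionaries, not the Hypabase query API:

```python
edges = [
    {"type": "bought", "roles": {"subject": "user", "object": "hybrid bike",
                                 "source": "REI", "locus": "last month"}},
    {"type": "sent", "roles": {"subject": "Bob", "object": "quarterly report",
                               "recipient": "Alice", "instrument": "shared drive",
                               "locus": "yesterday"}},
    {"type": "gave", "roles": {"subject": "Alice", "object": "book",
                               "recipient": "Bob"}},
]


def find(edges, edge_type=None, **role_filters):
    """Return edges whose type and role fillers match every filter exactly."""
    matches = []
    for edge in edges:
        if edge_type is not None and edge["type"] != edge_type:
            continue
        if all(edge["roles"].get(role) == filler
               for role, filler in role_filters.items()):
            matches.append(edge)
    return matches


# "What did I get from REI?"
print([e["roles"]["object"] for e in find(edges, source="REI")])
# ['hybrid bike']

# "Who gave Bob something?"
print([e["roles"]["subject"] for e in find(edges, edge_type="gave", recipient="Bob")])
# ['Alice']
```

The same query always returns the same edges; there is no similarity threshold to tune.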

When the same entity appears in multiple hyperedges, it becomes a connection point:

(bought :subject user :object "hybrid bike" :source REI ...)

(uses :subject user :object "hybrid bike" :locus commute ...)

(repaired :subject user :object "hybrid bike" :instrument "new brakes" ...)

Three hyperedges, connected through "hybrid bike" and "user". Query for the bike and you get the complete history: purchase, usage, maintenance. The graph structure emerges from extraction — no manual linking required.
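The connection-point idea can be sketched as an inverted index from entity to the edges it participates in. `build_entity_index` is a hypothetical helper, and matching entities by exact string is an assumption; real systems usually need entity resolution on top.

```python
from collections import defaultdict


def build_entity_index(edges):
    """Map each entity string to the positions of the edges it appears in."""
    index = defaultdict(list)
    for position, edge in enumerate(edges):
        # A set avoids listing an edge twice when one entity fills two roles.
        fillers = {f for f in edge["roles"].values() if isinstance(f, str)}
        for filler in fillers:
            index[filler].append(position)
    return index


edges = [
    {"type": "bought", "roles": {"subject": "user", "object": "hybrid bike",
                                 "source": "REI"}},
    {"type": "uses", "roles": {"subject": "user", "object": "hybrid bike",
                               "locus": "commute"}},
    {"type": "repaired", "roles": {"subject": "user", "object": "hybrid bike",
                                   "instrument": "new brakes"}},
]

index = build_entity_index(edges)
bike_history = [edges[i]["type"] for i in index["hybrid bike"]]
print(bike_history)  # ['bought', 'uses', 'repaired']
```

No linking step is written anywhere: the shared entity string is the link.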

The bike purchase is one fact with four participants. In a triple-based system:

(user, bought, bike)

(bike, from, REI)

(purchase, when, last_month)

Three fragments. The connection between "bought" and "REI" requires inference. In hypergraph extraction, it's one edge — the structure matches the original fact.

Extraction converts natural language into structured hyperedges.

The extractor itself can be an LLM or a dedicated NLP pipeline. LLMs offer flexibility with informal language, implicit references, and domain-specific terminology — they handle the linguistic complexity (passive voice, coreference, implied arguments) that rule-based systems struggle with. Trained semantic parsers offer higher throughput and lower cost for standard language patterns.

The key architectural decision: extraction happens outside the memory system. Hypabase Memory does graph work — store, traverse, score, return. It accepts structured atoms and persists them. The calling agent or pipeline handles the natural language parsing, choosing whatever extraction method fits the use case.
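The boundary can be sketched as follows. Everything here is hypothetical and chosen for illustration: `toy_extractor` stands in for an LLM or semantic parser, and `InMemoryStore.store_atom` stands in for whatever the memory layer exposes. Neither is the Hypabase API.

```python
def toy_extractor(text):
    """Stand-in for an LLM or parser: recognises a single hard-coded sentence."""
    known = {
        "Alice gave Bob a book at the library.": [
            {"type": "gave", "roles": {"subject": "Alice", "object": "book",
                                       "recipient": "Bob", "locus": "library"}},
        ],
    }
    return known.get(text, [])


class InMemoryStore:
    """Stand-in memory layer: it only accepts and persists structured atoms."""

    def __init__(self):
        self.edges = []

    def store_atom(self, atom):
        # The store never sees raw text, only an edge type plus labelled roles.
        if "type" not in atom or "roles" not in atom:
            raise ValueError("atom must have 'type' and 'roles'")
        self.edges.append(atom)


def ingest(text, extractor, store):
    """Caller-side pipeline: parse language first, then hand atoms to the store."""
    atoms = extractor(text)
    for atom in atoms:
        store.store_atom(atom)
    return len(atoms)


store = InMemoryStore()
stored = ingest("Alice gave Bob a book at the library.", toy_extractor, store)
print(stored)                  # 1
print(store.edges[0]["type"])  # gave
```

Because `ingest` takes the extractor as an argument, swapping an LLM for a trained parser changes nothing on the storage side.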

Consider: "Alice gave Bob a book at the library."

As triples:

(Alice, gave, book)

(giving_event, recipient, Bob)

(giving_event, location, library)

Three fragments plus a synthetic node. The coherence is lost. "Who did Alice give books to?" requires joining across triples. Different extractors might produce (book, given_by, Alice) — no enforced role semantics.

As text chunks:

The sentence embeds as a vector. But "Alice gave Bob a book" and "Bob gave Alice a book" have nearly identical embeddings — the role reversal is invisible. You can't query "who was the recipient" — you can only ask "what chunks are similar to 'gave book'".

As a hyperedge:

(gave :subject Alice :object book :recipient Bob :locus library)

One edge, four labeled participants. Query by any role. No joins, no ambiguity, no lost structure.

Structured extraction has a cost: extraction quality bounds system quality.

If the LLM extracts "bought" as "acquired" or drops the time reference, that information is lost. No retrieval algorithm can recover what extraction missed.

This is the representation thesis from Part 1 of this series: the structure you store determines the ceiling of what you can retrieve. Structured extraction raises the ceiling, but it also makes extraction errors consequential.

In practice, modern LLMs extract reliably when prompted well. The precision gain from structure outweighs the occasional extraction error. And extraction errors are debuggable — you can inspect the hypergraph and see exactly what was stored.

Hypabase Memory stores structured hyperedges labeled with Kāraka roles; the extraction itself stays with the caller. The remember() API accepts PENMAN-formatted atoms, validates role names against the 8-role vocabulary, and stores each as a hyperedge with labeled incidences. The same structure that enables precise storage enables precise retrieval.

Previous: What Ancient Sanskrit Solves in AI Memory

Next in series: The Forgetting Problem: How Neuroscience Solves AI Memory