Part 4 of the Hypabase Memory Series

Not all memories are equal. But most AI memory systems treat them as if they were.

"I had coffee with Sarah yesterday" should fade. "Sarah is a data scientist" should persist. "To deploy, run ./scripts/deploy.sh" should be nearly permanent.

This is the forgetting problem: if we treat all memories the same, we get one of two failure modes — everything persists (noise overwhelms signal) or everything decays equally (important facts disappear with trivia).

Neuroscience solved this decades ago. We can borrow the solution.

Tulving's Taxonomy

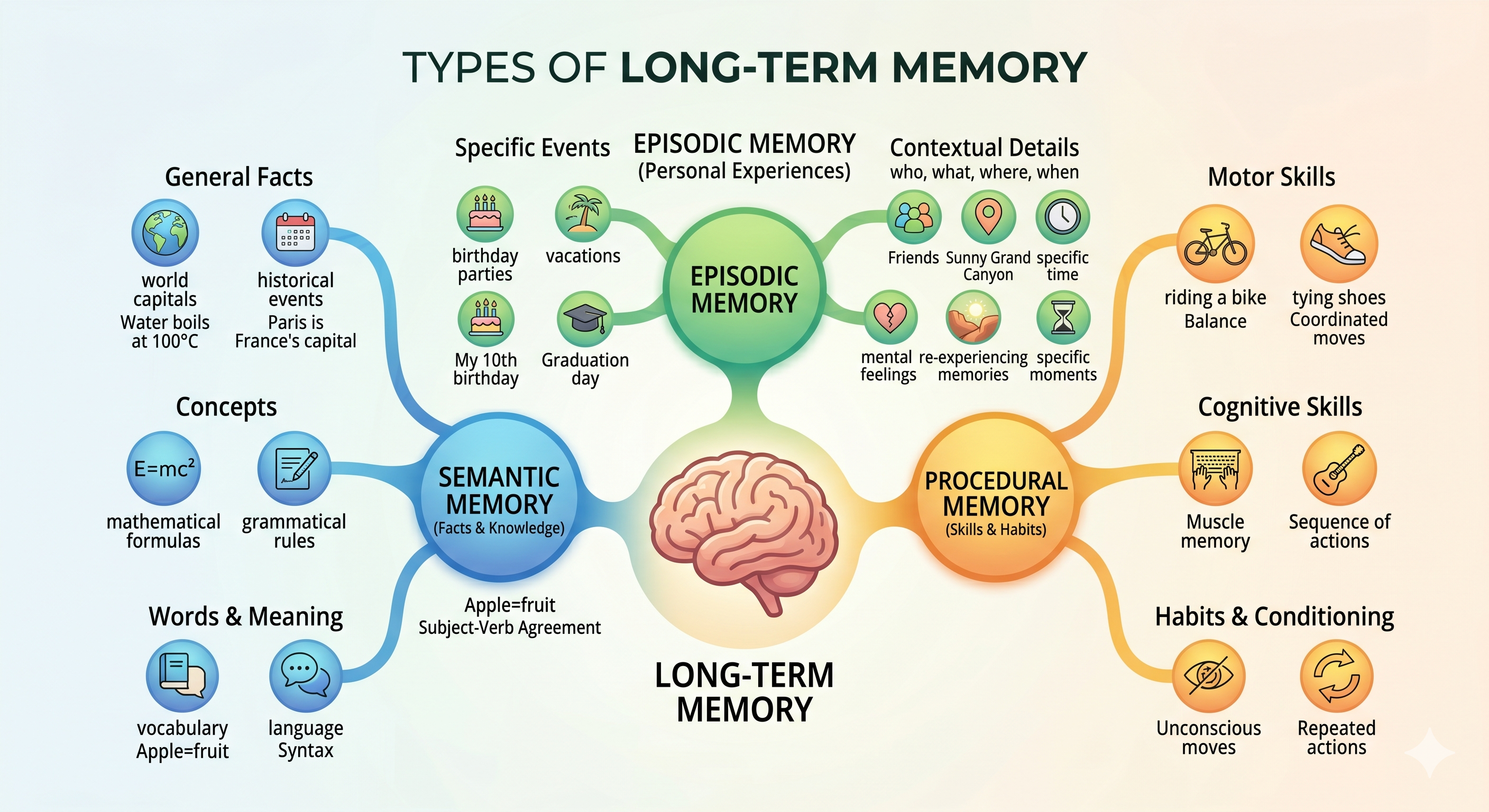

In 1972, psychologist Endel Tulving proposed a distinction that became foundational: episodic memory versus semantic memory. His chapter "Episodic and Semantic Memory" has been cited over 5,000 times and remains central to memory research.

Episodic memory stores events — specific occurrences anchored in time and place. "I ate sushi at that restaurant on Tuesday." These memories are autobiographical. They have a "when" and often a "where."

Semantic memory stores facts — general knowledge abstracted from specific experiences. "Sushi is a Japanese dish made with rice and fish." These memories have no timestamp. They're true (or believed true) independent of when you learned them.

Later research added a third category:

Procedural memory stores skills — how to do things. "How to ride a bike." "How to type." These memories are often implicit. You can execute the skill without consciously recalling the steps.

Different Systems, Different Decay

These aren't just conceptual categories. They correspond to different brain systems with different properties.

Episodic memory is primarily hippocampal. The hippocampus binds together the what, where, and when of experiences. These memories are vivid when fresh, but decay relatively quickly. Most of what you did last month is already fuzzy.

Semantic memory is primarily neocortical. Facts get consolidated from hippocampal traces into distributed cortical representations. This process is slow but produces durable memories. You still know that Paris is the capital of France, even though you can't remember when you learned it.

Procedural memory involves the basal ganglia and cerebellum. Skills become automated through repetition. Once consolidated, they're remarkably resistant to forgetting. You can ride a bike after decades of not riding.

The decay rates differ by orders of magnitude:

- Episodic: Days to weeks without rehearsal

- Semantic: Months to years

- Procedural: Years to lifetime

Memory Types for AI Agents

An AI agent accumulates information from conversations. Some of it is eventful:

"I met with the client today. They want the deliverable by Friday."

This is episodic. It happened at a specific time. Next month, the specifics matter less — was it that client meeting or another one?

Some information is factual:

"Our API rate limit is 1000 requests per minute."

This is semantic. It's a fact about the system. It doesn't decay just because time passes. It changes when the rate limit changes, not when the memory ages.

Some information is procedural:

"When the build fails, check the logs at /var/log/build.log first."

This is a procedure. A debugging skill. It should persist essentially forever, or until the system changes.

Decay Rates in Hypabase

We model this with per-type decay rates:

MEMORY_DECAY_RATES = {
    "episodic": 0.15,    # Fast decay: events fade
    "semantic": 0.02,    # Slow decay: facts persist
    "procedural": 0.01,  # Slowest: skills are durable
}

These are exponential decay rates per day. After one day:

- Episodic memory: 86% strength (e^-0.15)

- Semantic memory: 98% strength (e^-0.02)

- Procedural memory: 99% strength (e^-0.01)

After one month (30 days):

- Episodic: 1% strength — nearly gone

- Semantic: 55% strength — still strong

- Procedural: 74% strength — very strong

The numbers are tunable, but the principle matters: different types decay differently.
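To check the percentages yourself, here is a minimal sketch that applies e^(-rate × age) to the three rates. The function name `decayed_strength` is ours for illustration and is not part of the Hypabase API.

```python
import math

# Per-day exponential decay rates, as listed in this article.
MEMORY_DECAY_RATES = {
    "episodic": 0.15,
    "semantic": 0.02,
    "procedural": 0.01,
}


def decayed_strength(memory_type: str, age_days: float) -> float:
    """Return e^(-rate * age); a brand-new memory has strength 1.0."""
    rate = MEMORY_DECAY_RATES[memory_type]
    return math.exp(-rate * age_days)


if __name__ == "__main__":
    for memory_type in MEMORY_DECAY_RATES:
        day1 = decayed_strength(memory_type, 1)
        day30 = decayed_strength(memory_type, 30)
        print(f"{memory_type:<10} day 1: {day1:.0%}  day 30: {day30:.0%}")
```

Changing a rate in the dictionary and rerunning is a quick way to see how a tuning choice plays out over a month.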

Classifying Memories

How do we know which type a memory is?

Explicit annotation. The extraction prompt asks the LLM to classify:

(met :subject user :object client :locus today :memory_type episodic)

(is :subject "API rate limit" :value "1000 req/min" :memory_type semantic)

(check :subject user :object logs :locus "/var/log/build.log" :memory_type procedural)

The LLM sees the context and makes a judgment. Event-like statements with time references get episodic. Definitional statements get semantic. How-to instructions get procedural.

Heuristic fallback. If the LLM doesn't classify, we can infer:

- Has a time marker (yesterday, last week) → episodic

- States "X is Y" or "X has property Y" → semantic

- Describes steps or conditions → procedural

Default: episodic. When in doubt, treat it as an event. Episodic is the safest default because it decays. A semantic fact misclassified as episodic will fade and can be re-learned. An episodic event misclassified as semantic will persist as noise.
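The fallback rules could be sketched roughly as follows. Everything here is an assumption for illustration: the regular expressions are deliberately tiny, the function name `classify_memory` is hypothetical, and checking procedural cues before "X is Y" cues is our choice rather than something the rules above prescribe.

```python
import re

# Illustrative patterns only; a real system would need far broader coverage.
TIME_MARKERS = re.compile(
    r"\b(yesterday|today|tonight|this morning|last (week|month|year|time)|\d+ days? ago)\b",
    re.IGNORECASE,
)
PROCEDURE_MARKERS = re.compile(
    r"\b(when|if|first|then|step \d+|run|check)\b", re.IGNORECASE
)
FACT_MARKERS = re.compile(r"\b(is|are|has|have)\b", re.IGNORECASE)


def classify_memory(text: str) -> str:
    """Guess a memory type from surface cues, defaulting to episodic."""
    if TIME_MARKERS.search(text):
        return "episodic"    # anchored to a point in time
    if PROCEDURE_MARKERS.search(text):
        return "procedural"  # describes steps or conditions
    if FACT_MARKERS.search(text):
        return "semantic"    # "X is Y" / "X has property Y"
    return "episodic"        # when in doubt, let it decay


if __name__ == "__main__":
    print(classify_memory("I had coffee with Sarah yesterday"))
    print(classify_memory("Our API rate limit is 1000 requests per minute."))
    print(classify_memory("To deploy, run ./scripts/deploy.sh"))
```

A single label is a simplification: a sentence that mixes an event with a procedure gets only the first matching type here, which is one reason LLM annotation is the primary path and this is only a fallback.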

The Consolidation Process

In human memory, episodic traces consolidate into semantic knowledge over time. You have many episodic memories of eating apples. Eventually, you have the semantic knowledge "I like apples" without remembering specific apple-eating events.

This is a form of compression. Many similar episodes become one abstracted fact.

Hypabase's consolidate() operation does something similar:

- Find episodic edges involving the same entities

- Detect patterns (same action type, same participants)

- Optionally create a semantic summary edge

This isn't automatic. The agent (or a background process) triggers consolidation. But the memory type informs the process — episodic memories are candidates for consolidation; semantic memories are often the result.
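As a rough illustration of those three steps, the sketch below groups episodic edges by action and participants and emits a semantic summary once a pattern repeats often enough. The `Edge` class, the `consolidate_sketch` name, and the `min_episodes` threshold are all hypothetical; the real consolidate() signature and behavior may differ.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Edge:
    action: str
    participants: frozenset
    memory_type: str


def consolidate_sketch(edges, min_episodes=3):
    """Return semantic summary edges for episodic patterns seen often enough."""
    groups = defaultdict(list)
    for edge in edges:
        # Only episodic memories are candidates for consolidation.
        if edge.memory_type == "episodic":
            groups[(edge.action, edge.participants)].append(edge)

    summaries = []
    for (action, participants), episodes in groups.items():
        # A pattern is the same action with the same participants, repeated.
        if len(episodes) >= min_episodes:
            summaries.append(Edge(action, participants, "semantic"))
    return summaries


if __name__ == "__main__":
    apple = Edge("ate", frozenset({"user", "apple"}), "episodic")
    meeting = Edge("met", frozenset({"user", "client"}), "episodic")
    print(consolidate_sketch([apple, apple, apple, meeting]))
```

The original episodes are left untouched in this sketch; they simply keep decaying at the episodic rate while the summary edge takes on the slower semantic rate.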

Memory Type and Retrieval

Memory type affects not just decay but retrieval ranking.

When a user asks a factual question ("What's our API rate limit?"), semantic memories should rank higher. The user wants the current fact, not the episode where they learned it.

When a user asks about events ("What happened in the client meeting?"), episodic memories should rank higher. The specific details matter.

Memory type becomes a filter:

# Factual query
memories = recall(query="API rate limit", memory_type="semantic")

# Event query
memories = recall(query="client meeting", memory_type="episodic")

The type annotation enables targeted retrieval.
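To make the filtering concrete, here is a toy in-memory stand-in for recall(). It is not the Hypabase implementation: the `Memory` class, the `toy_recall` name, the keyword-overlap scoring, and the precomputed `strength` field are all assumptions chosen to keep the example self-contained.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Memory:
    text: str
    memory_type: str
    strength: float  # assumed to be precomputed, in [0, 1]


def toy_recall(store: List[Memory], query: str,
               memory_type: Optional[str] = None) -> List[Memory]:
    """Rank memories by keyword overlap times strength, optionally by type."""
    query_terms = set(query.lower().split())
    scored = []
    for memory in store:
        # The type filter is applied before any scoring happens.
        if memory_type is not None and memory.memory_type != memory_type:
            continue
        overlap = len(query_terms & set(memory.text.lower().split()))
        if overlap:
            scored.append((overlap * memory.strength, memory))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [memory for _, memory in scored]


if __name__ == "__main__":
    store = [
        Memory("API rate limit is 1000 requests per minute", "semantic", 0.9),
        Memory("We discussed the API rate limit in the client meeting today",
               "episodic", 0.8),
    ]
    for hit in toy_recall(store, "API rate limit", memory_type="semantic"):
        print(hit.text)
```

Passing `memory_type=None` falls back to ranking across all types, which is where a softer type-based boost could replace the hard filter.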

ACT-R Connection

This isn't ad-hoc design. It connects to ACT-R, a cognitive architecture from John Anderson's lab at CMU that models human memory and learning.

ACT-R's declarative memory module has:

- Base-level activation: Memories gain strength from recency and frequency

- Spreading activation: Cues activate related memories

- Activation threshold: Memories below threshold aren't retrieved

Our memory strength formula (next article) is modeled on ACT-R's base-level learning equation. Memory types modulate the decay parameter in that equation.

ACT-R has been validated against decades of psychological experiments. When we adopt its principles, we're not guessing. We're implementing a tested model of human memory.
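For reference, ACT-R's base-level learning equation is B = ln(Σ t_j^(-d)), where t_j is the time since the j-th access and d is the decay parameter (0.5 is the conventional default). A minimal sketch follows; the function and argument names are ours and belong to no ACT-R or Hypabase API. Note that this form decays as a power of elapsed time, and how the per-type rates plug into the final strength formula is left to the next article.

```python
import math
from typing import Sequence


def base_level_activation(ages: Sequence[float], decay: float = 0.5) -> float:
    """ACT-R base-level learning: ln of the summed, decayed access traces.

    `ages` holds the time elapsed since each past access (any positive unit).
    """
    if not ages or any(age <= 0 for age in ages):
        raise ValueError("ages must be a non-empty sequence of positive numbers")
    return math.log(sum(age ** -decay for age in ages))


if __name__ == "__main__":
    # Recency: a single access one day ago versus ten days ago.
    print(base_level_activation([1.0]), base_level_activation([10.0]))
    # Frequency: three accesses beat one access of the same age.
    print(base_level_activation([2.0, 2.0, 2.0]), base_level_activation([2.0]))
```

Both effects named in the list above show up directly: more recent accesses and more frequent accesses each raise activation.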

Edge Cases

Episodic event that updates semantic fact:

"Sarah got promoted to Senior Engineer yesterday."

This is episodic (it happened yesterday) but it updates a semantic fact (Sarah's title). We store both:

(promoted :subject Sarah :locus yesterday :memory_type episodic)

(is :subject Sarah :attribute title :value "Senior Engineer" :memory_type semantic)

The episodic memory will fade. The semantic memory will persist. The fact that there was a promotion event is less important than the current state.

Procedure learned from an event:

"When the server crashed last time, we fixed it by restarting the cache service."

The event (server crashed last time) is episodic. The procedure (restart cache service to fix crashes) is procedural. Both get stored:

(crashed :subject server :locus "last time" :memory_type episodic)

(restart :subject user :object "cache service" :condition "server crash" :memory_type procedural)

The specific crash fades. The fix persists.

Why This Matters for Agents

Without memory types, an agent is stuck choosing among three options, none of them good:

Option 1: Aggressive decay. All memories fade quickly. The agent forgets important facts. Users have to repeat themselves. "I already told you I prefer Python!"

Option 2: No decay. All memories persist. The context fills with noise. Old events crowd out current facts. Retrieval quality degrades.

Option 3: Uniform decay. All memories decay at the same rate. Facts and events are treated equally. Some important things fade too fast; some unimportant things persist too long.

Memory types resolve the dilemma. Events fade. Facts persist. Skills endure. The agent's memory matches intuition about what should be remembered.

Ebbinghaus's 1885 experiments showed that forgetting is steep at first and then levels off, a curve commonly approximated as exponential. Memories are strong when fresh and decay over time. In our model, the rate of decay depends on the memory's type.

We implement this directly:

- Exponential decay: strength = e^(-rate × age)

- Rate varies by type: episodic > semantic > procedural

- Other factors (access frequency, importance) modulate strength
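One way to build intuition for the rates is to convert them into half-lives: setting e^(-rate × t) = 0.5 gives t = ln(2) / rate. The sketch below does that conversion, plus the more general "days until strength drops to a threshold". Both helper names are ours, and it considers decay only, ignoring the other modulating factors.

```python
import math

# Per-day exponential decay rates, as listed in this article.
MEMORY_DECAY_RATES = {"episodic": 0.15, "semantic": 0.02, "procedural": 0.01}


def half_life_days(rate: float) -> float:
    """Days until strength halves: solve e^(-rate * t) = 0.5 for t."""
    return math.log(2) / rate


def days_until(rate: float, threshold: float) -> float:
    """Days until strength falls to `threshold` (0 < threshold <= 1)."""
    if not 0 < threshold <= 1:
        raise ValueError("threshold must be in (0, 1]")
    return math.log(1 / threshold) / rate


if __name__ == "__main__":
    for memory_type, rate in MEMORY_DECAY_RATES.items():
        print(f"{memory_type:<10} half-life: {half_life_days(rate):.1f} days")
```

With the rates above, the half-lives come out to roughly 4.6 days for episodic, 34.7 days for semantic, and 69.3 days for procedural memories.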

The next article details the full strength formula. Memory type is one input, and an important one: it captures more than a century of observations about how different kinds of knowledge behave.

Memory types tell us what kind of thing we're remembering. Memory strength tells us how well we remember it. Both determine what gets retrieved.

Hypabase Memory: Type-Aware Decay

Hypabase Memory stores memory type as a first-class property on every hyperedge. The forget() operation uses type-specific decay rates — episodic memories expire faster than semantic facts, which expire faster than procedural knowledge. The result: agents that naturally remember what matters and forget what doesn't.
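A type-aware forgetting pass might look something like the sketch below. This is not the actual forget() implementation: the `StoredEdge` class, the `forget_sketch` name, and the 0.05 cutoff are hypothetical, and it uses decay alone where the real strength formula has more inputs.

```python
import math
from dataclasses import dataclass

# Per-day exponential decay rates, as listed in this article.
MEMORY_DECAY_RATES = {"episodic": 0.15, "semantic": 0.02, "procedural": 0.01}


@dataclass
class StoredEdge:
    label: str
    memory_type: str
    age_days: float


def forget_sketch(edges, threshold=0.05):
    """Split edges into (kept, forgotten) by their decayed strength."""
    kept, forgotten = [], []
    for edge in edges:
        rate = MEMORY_DECAY_RATES[edge.memory_type]
        strength = math.exp(-rate * edge.age_days)
        (kept if strength >= threshold else forgotten).append(edge)
    return kept, forgotten


if __name__ == "__main__":
    month_old = [
        StoredEdge("coffee with Sarah", "episodic", 30),
        StoredEdge("Sarah is a data scientist", "semantic", 30),
        StoredEdge("run ./scripts/deploy.sh to deploy", "procedural", 30),
    ]
    kept, forgotten = forget_sketch(month_old)
    print("kept:", [e.label for e in kept])
    print("forgotten:", [e.label for e in forgotten])
```

All three memories are the same age, yet only the episodic one falls below the cutoff, which is the behavior the opening examples asked for.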

Previous: The Embedding Problem: Extracting Structure from Text

Next in series: The Math Behind What Your Agent Remembers or Forgets