Part 6 of the Hypabase Memory Series

You've stored memories as hyperedges. You've labeled participants with kāraka roles. You've assigned memory types and computed strength. Now someone asks a question.

How do you find the right memories?

The obvious answer: embed the query, find similar embeddings. This is what most RAG systems do.

It's not enough. And neuroscience figured out why decades ago.

The Limits of Embedding Search

Consider an agent that has stored two memories from separate conversations:

Memory 1: "Alice spent $400 on bike maintenance last month — new brakes, tune-up, and tire replacement."

Memory 2: "Alice drove 800 miles on her road trip through the mountains. The bike rack held up well."

Now the user asks: "What are Alice's bike-related expenses?"

Embedding search will strongly match Memory 1, which mentions spending explicitly. Memory 2 talks about a road trip, not expenses, so its embedding sits far from the query.

But Memory 2 is relevant. Road trips have costs. The bike rack implies equipment purchases. If Alice's finances are the topic, both memories matter.

The memories share an entity: Alice's bike. In the graph, they're connected through the "bike" node. But in embedding space, "maintenance costs" and "mountain road trip" are far apart.

Embedding search alone misses the structural connection.
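To make the structural connection concrete, here is a minimal sketch that treats each memory as a hyperedge, modeled as a plain set of entity nodes. The node names and the `shared_nodes` helper are illustrative assumptions, not Hypabase's storage format.

```python
# Hypothetical toy data: each memory is a hyperedge, i.e. a set of entity nodes.
memories = {
    "maintenance": {"Alice", "bike", "brakes", "tune-up", "tires"},
    "road_trip": {"Alice", "bike", "bike rack", "mountains"},
}


def shared_nodes(a, b):
    """Return the entities that appear in both hyperedges."""
    return memories[a] & memories[b]


overlap = shared_nodes("maintenance", "road_trip")
print(sorted(overlap))
```

The overlap is what a graph traversal can follow, even when the two texts embed far apart.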

Complementary Learning Systems

In 1995, McClelland, McNaughton, and O'Reilly published "Why There Are Complementary Learning Systems in the Hippocampus and Neocortex" — a paper that became foundational in memory research.

Their thesis: the brain uses two complementary systems for memory.

The hippocampus provides rapid encoding of specific episodes. It binds together the what, where, and when of experiences. It's an index — pointing to where things are stored.

The neocortex provides slow learning of statistical regularities. It builds distributed representations that capture semantic similarity. It's a pattern-completion engine.

Retrieval involves both. The hippocampus finds specific memories through sparse indices. The neocortex finds related memories through pattern matching. The systems complement each other because they have different failure modes.

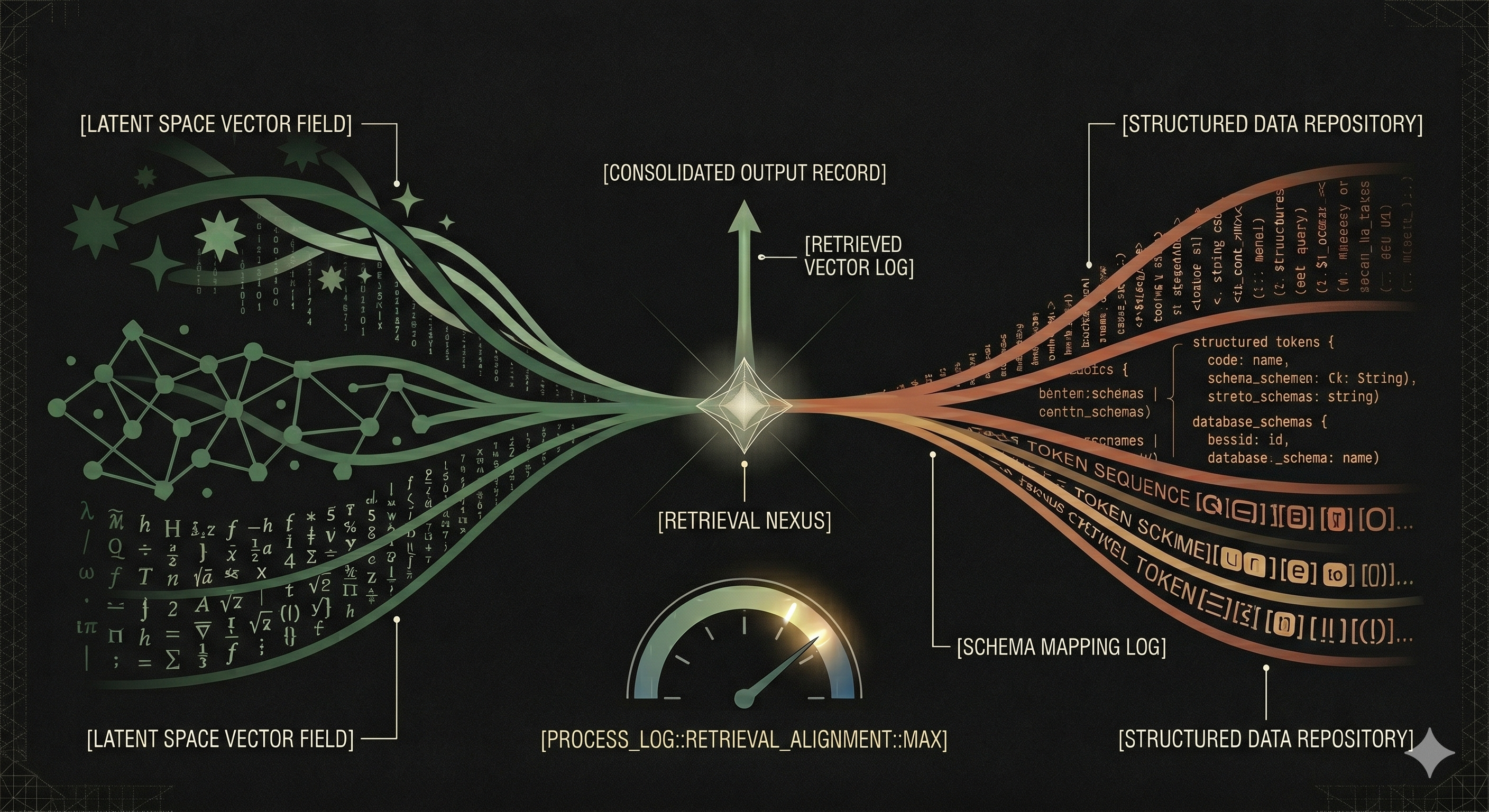

Two Arms for Retrieval

Hypabase Memory implements dual-arm retrieval:

Structural arm (hippocampal analog): Traverse the hypergraph from query-relevant entities. Find memories through graph connections.

Semantic arm (neocortical analog): Embed the query and find similar memory embeddings. Find memories through pattern similarity.

Fusion: Combine rankings from both arms.

Neither arm alone is sufficient. Together, they cover each other's blind spots.

The Structural Arm: Query-Biased PPR

The structural arm uses Query-Biased Personalized PageRank (QB-PPR), adapted from Chitra and Raphael's work on hypergraph random walks.

The Intuition

Imagine a random walker on the hypergraph. At each step:

- The walker is at a node

- It picks a hyperedge containing that node (weighted by relevance to the query)

- It picks another node in that hyperedge (weighted by specificity)

- It moves to that node

After many steps, where does the walker spend most of its time? That's PageRank.

"Query-biased" means the walker is teleported back to query-relevant nodes with some probability. "Personalized" means those teleportation targets are specific to this query, not uniform.

The Problem: Star Topology

Personal memory graphs have a characteristic structure: a central "user" node connected to nearly everything. The user ate breakfast, the user prefers Python, the user met with clients.

Standard PageRank assigns most mass to hubs. The "user" node would dominate every query. That's useless — every memory involves the user.

We need three corrections:

Correction 1: Inverse-Degree Vertex Weights

Within each hyperedge, weight nodes by the inverse of their global degree:

γ_e(v) = (1/degree(v)) / Σ(1/degree(u)) for u in edge e

A node with degree 150 (like "user") gets weight proportional to 1/150.

A node with degree 2 (like "hybrid bike") gets weight proportional to 1/2.

The random walker preferentially moves to specific entities. Hubs are suppressed.
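The weighting above can be sketched in a few lines. This is a minimal illustration of the γ_e(v) formula using the two degrees quoted in the text; the function name and the two-node edge are assumptions made for the example.

```python
def vertex_weights(edge_nodes, degree):
    """Compute gamma_e(v): inverse global degree, normalized within one hyperedge."""
    inverse = {v: 1.0 / degree[v] for v in edge_nodes}
    total = sum(inverse.values())
    return {v: w / total for v, w in inverse.items()}


# Degrees taken from the example in the text.
degree = {"user": 150, "hybrid bike": 2}
gamma = vertex_weights(["user", "hybrid bike"], degree)

for node, weight in gamma.items():
    print(f"{node}: {weight:.4f}")
```

In this two-node edge the hub ends up with 1/76 of the weight and the specific entity with 75/76, so a walker leaving the edge almost always lands on "hybrid bike".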

Correction 2: Non-linear Edge Weight Amplification

Edges are weighted by cosine similarity to the query, raised to a power:

ω_q(e) = cosine(e, query)^β where β = 4

Without amplification, a 1.3:1 ratio in similarity (e.g., 0.65 vs 0.50) produces weak bias. The walker wanders too randomly.

With β=4, that ratio becomes about 2.9:1 in effective weight (1.3^4 ≈ 2.86). The walker strongly prefers edges relevant to the query.
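The arithmetic is easy to check. In this small sketch, `edge_weight` is a hypothetical helper; clamping negative similarities to zero is an added assumption so that the weight stays non-negative.

```python
BETA = 4


def edge_weight(cos_sim, beta=BETA):
    """omega_q(e) = cosine(e, query) ** beta, with negatives clamped to 0 (assumption)."""
    return max(cos_sim, 0.0) ** beta


ratio_raw = 0.65 / 0.50
ratio_amplified = edge_weight(0.65) / edge_weight(0.50)

print(f"raw ratio:       {ratio_raw:.2f}")
print(f"amplified ratio: {ratio_amplified:.2f}")
```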

Correction 3: High Teleportation Rate

We use α=0.5 — the walker teleports back to seed nodes 50% of the time.

On small graphs (50-200 edges, typical of personal memory), a lower teleportation rate lets the walk drift toward the graph-wide stationary distribution, washing out the query-specific signal. High teleportation keeps mass concentrated near the seeds.
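A toy experiment illustrates the effect of α. The sketch below runs plain personalized PageRank by power iteration on a hypothetical four-node ring (not a hypergraph, and not Hypabase's code) and compares a high and a low teleportation rate.

```python
def personalized_pagerank(P, seeds, alpha, iters=200):
    """Power iteration for p = alpha * s + (1 - alpha) * P^T p.

    P[u][v] is the probability of stepping from u to v (each row sums to 1).
    """
    nodes = list(P)
    s = {v: (1.0 / len(seeds) if v in seeds else 0.0) for v in nodes}
    p = dict(s)
    for _ in range(iters):
        nxt = {v: alpha * s[v] for v in nodes}
        for u in nodes:
            for v, prob in P[u].items():
                nxt[v] += (1.0 - alpha) * p[u] * prob
        p = nxt
    return p


# Hypothetical ring a-b-c-d: the walker steps to either neighbor with equal odds.
P = {
    "a": {"b": 0.5, "d": 0.5},
    "b": {"a": 0.5, "c": 0.5},
    "c": {"b": 0.5, "d": 0.5},
    "d": {"c": 0.5, "a": 0.5},
}

high = personalized_pagerank(P, {"a"}, alpha=0.5)
low = personalized_pagerank(P, {"a"}, alpha=0.05)

print(f"alpha=0.5  mass at seed: {high['a']:.3f}")
print(f"alpha=0.05 mass at seed: {low['a']:.3f}")
```

With α=0.5 the seed keeps more than half of the mass. With α=0.05 the seed's share falls to roughly 0.28, close to the uniform 0.25 that this ring would give every node with no query bias at all.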

Seed Selection

Seeds are nodes relevant to the query. We find them by:

- Embedding individual query words and matching to node embeddings (threshold 0.65)

- Embedding the full query and matching to nodes (threshold 0.5, limit 8)

- Union of all matches

For "Alice's bike expenses", seeds might be: Alice, bike, expenses.
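The three steps above can be sketched as follows. The 3-dimensional vectors are hand-made stand-ins for real embeddings, and reading "threshold 0.5, limit 8" as "top 8 nodes, then keep those at or above 0.5" is an assumption about the intended behavior.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def select_seeds(word_vecs, query_vec, node_vecs,
                 word_threshold=0.65, query_threshold=0.5, query_limit=8):
    seeds = set()
    # Step 1: match each query word against every node.
    for word_vec in word_vecs:
        for node, node_vec in node_vecs.items():
            if cosine(word_vec, node_vec) >= word_threshold:
                seeds.add(node)
    # Step 2: match the full query, keeping at most `query_limit` nodes.
    scored = sorted(((cosine(query_vec, vec), node)
                     for node, vec in node_vecs.items()), reverse=True)
    seeds.update(node for score, node in scored[:query_limit]
                 if score >= query_threshold)
    # Step 3: the set already holds the union of both match types.
    return seeds


# Hypothetical toy embeddings.
node_vecs = {
    "Alice": [1.0, 0.0, 0.0],
    "hybrid bike": [0.0, 1.0, 0.0],
    "budget": [0.0, 0.0, 1.0],
    "breakfast": [-1.0, 0.0, 0.0],
}
word_vecs = [[1.0, 0.0, 0.0], [0.1, 0.9, 0.0], [0.0, 0.2, 0.9]]  # Alice, bike, expenses
query_vec = [0.5, 0.5, 0.5]

seeds = select_seeds(word_vecs, query_vec, node_vecs)
print(sorted(seeds))
```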

Edge Scoring

After the random walk converges, each hyperedge is scored by the PageRank mass of its constituent nodes, weighted by specificity:

score(e) = Σ PageRank(v) × γ_e(v) for v in e

Edges containing high-PageRank, specific nodes rank highest.
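Putting the three corrections and the edge scoring together, a compact sketch of the structural arm might look like this. It is an illustration under stated assumptions rather than Hypabase's implementation: the toy graph and similarities are invented, degree is taken to mean the number of hyperedges containing a node, the walker may re-pick its current node, and a node whose incident edges all have zero weight sends its mass back to the seeds.

```python
from collections import defaultdict


def qb_ppr(edges, edge_sim, seeds, alpha=0.5, beta=4, iters=5):
    """edges: {edge_id: [nodes]}, edge_sim: {edge_id: cosine(edge, query)}."""
    degree = defaultdict(int)
    incident = defaultdict(list)
    for e, nodes in edges.items():
        for v in nodes:
            degree[v] += 1
            incident[v].append(e)

    # Correction 1: inverse-degree vertex weights, normalized per edge.
    gamma = {}
    for e, nodes in edges.items():
        total = sum(1.0 / degree[u] for u in nodes)
        gamma[e] = {v: (1.0 / degree[v]) / total for v in nodes}

    # Correction 2: amplified query-relevance edge weights.
    omega = {e: max(edge_sim[e], 0.0) ** beta for e in edges}

    seed_nodes = [v for v in seeds if v in degree]
    if not seed_nodes:
        raise ValueError("no seed is present in the graph")
    s = {v: (1.0 / len(seed_nodes) if v in seed_nodes else 0.0) for v in degree}

    # Correction 3: power iteration with a high teleportation rate.
    p = dict(s)
    for _ in range(iters):
        nxt = {v: alpha * s[v] for v in degree}
        for u in degree:
            z = sum(omega[e] for e in incident[u])
            if z == 0.0:  # assumption: dangling mass returns to the seeds
                for v in degree:
                    nxt[v] += (1.0 - alpha) * p[u] * s[v]
                continue
            for e in incident[u]:
                for v, g in gamma[e].items():
                    nxt[v] += (1.0 - alpha) * p[u] * (omega[e] / z) * g
        p = nxt

    # Edge scoring: score(e) = sum of PageRank(v) * gamma_e(v) over v in e.
    scores = {e: sum(p[v] * gamma[e][v] for v in edges[e]) for e in edges}
    return p, scores


# Hypothetical toy graph and similarities.
edges = {
    "maintenance_memory": ["Alice", "hybrid bike", "maintenance"],
    "road_trip_memory": ["Alice", "hybrid bike", "road trip"],
    "breakfast_memory": ["Alice", "breakfast"],
}
edge_sim = {"maintenance_memory": 0.65, "road_trip_memory": 0.50,
            "breakfast_memory": 0.20}

p, scores = qb_ppr(edges, edge_sim, seeds={"Alice", "hybrid bike"})
ranked = sorted(scores, key=scores.get, reverse=True)
print(ranked)
```

On this toy graph the road trip memory outranks the breakfast memory even though both contain the hub node "Alice", because it also contains the more specific seed "hybrid bike".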

The Semantic Arm: Embedding KNN

The semantic arm is straightforward:

- Embed the query

- Find the k nearest memory embeddings (cosine similarity)

- Return edges above a similarity threshold

We use sqlite-vec for KNN search — a virtual table in SQLite that provides vector similarity search without external dependencies.

Parameters:

- k = 150 (over-fetch for filtering)

- min_score = 0.3 (permissive threshold)

The semantic arm finds memories that "sound like" the query, regardless of graph structure.
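For illustration, the same logic can be written as a brute-force search in pure Python. This sketch does not use sqlite-vec; it only mirrors the parameters above, and the 2-dimensional vectors are invented stand-ins for real embeddings.

```python
import heapq
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def semantic_arm(query_vec, edge_vecs, k=150, min_score=0.3):
    """Return up to k (edge_id, similarity) pairs, best first, at or above min_score."""
    scored = ((cosine(query_vec, vec), edge_id) for edge_id, vec in edge_vecs.items())
    top = heapq.nlargest(k, scored)
    return [(edge_id, score) for score, edge_id in top if score >= min_score]


# Hypothetical toy embeddings.
edge_vecs = {
    "maintenance_memory": [0.9, 0.1],
    "general_budgeting": [0.7, 0.7],
    "road_trip_memory": [-0.2, 1.0],
}

hits = semantic_arm([1.0, 0.0], edge_vecs)
for edge_id, score in hits:
    print(f"{edge_id}: {score:.3f}")
```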

Fusion: Reciprocal Rank Fusion

We have two rankings — one from structure, one from semantics. How do we combine them?

Not by score averaging. The scores aren't calibrated. A PageRank score of 0.02 and a cosine similarity of 0.7 aren't comparable.

Not by score normalization. Normalizing to [0,1] loses information about absolute quality. A uniform ranking normalized to [0,1] looks the same as a skewed ranking.

Reciprocal Rank Fusion (RRF) combines rankings without requiring score calibration:

RRF(e) = 1/(k + rank_PPR(e)) + 1/(k + rank_cos(e))

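Here k is a smoothing constant (typically 60), unrelated to the k used for KNN above, and rank_PPR(e) and rank_cos(e) are the edge's 1-based ranks in the structural and semantic arms.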

If an edge ranks #1 in both arms: RRF = 1/61 + 1/61 = 0.033

If an edge ranks #1 in one arm and #100 in the other: RRF = 1/61 + 1/160 = 0.023

If an edge ranks #50 in both arms: RRF = 1/110 + 1/110 = 0.018

Edges that rank well in both arms dominate. Edges that rank well in one arm still contribute. The fusion is robust to calibration differences.
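A minimal sketch of RRF, reproducing the three worked values above. The function names are illustrative, and treating an edge that is missing from one arm as contributing nothing from that arm is an assumption.

```python
def rrf_pair(rank_ppr, rank_cos, k=60):
    """RRF score for one edge given its 1-based rank in each arm."""
    return 1.0 / (k + rank_ppr) + 1.0 / (k + rank_cos)


def rrf(rankings, k=60):
    """Fuse several ranked lists of edge ids (best first) into {edge_id: score}."""
    scores = {}
    for ranking in rankings:
        for rank, edge_id in enumerate(ranking, start=1):
            scores[edge_id] = scores.get(edge_id, 0.0) + 1.0 / (k + rank)
    return scores


print(f"#1 and #1:   {rrf_pair(1, 1):.3f}")
print(f"#1 and #100: {rrf_pair(1, 100):.3f}")
print(f"#50 and #50: {rrf_pair(50, 50):.3f}")

# Hypothetical rankings from the two arms.
structural = ["maintenance_memory", "road_trip_memory", "budget_discussion"]
semantic = ["maintenance_memory", "general_budgeting", "shopping_trip"]
fused = rrf([structural, semantic])
best = max(fused, key=fused.get)
print(best)
```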

Why Both Arms Matter

Semantic alone misses structural connections:

Query: "What do I know about Alice?"

- Semantic finds: memories mentioning "Alice" explicitly

- Misses: memories about "Python" that are connected to Alice through a preference edge

The structural arm traverses from the Alice node to her connected edges, finding Python-related memories even if "Alice" doesn't appear in the text.

Structural alone misses thematic similarity:

Query: "How should I handle deployment issues?"

- Structural finds: memories directly connected to "deployment" nodes

- Misses: memories about "CI/CD" or "production incidents" that use different terminology

The semantic arm finds thematically similar content through embedding proximity.

Together, they cover both:

The bike expenses example:

- Structural: Alice → bike → maintenance costs AND Alice → bike → road trip

- Semantic: "expenses" matches "maintenance costs" but not "road trip"

- Fused: maintenance costs ranks very high (both arms); road trip ranks moderately (carried mainly by the structural arm)

Both memories surface. Structural contributes what semantic misses.

The ACT-R Connection

ACT-R's memory model includes spreading activation: activation flows from cues to associated memories, weighted by association strength.

QB-PPR is a graph-theoretic analog. Query-relevant nodes (cues) spread activation through hyperedges to connected nodes. The inverse-degree weighting is analogous to ACT-R's fan effect — specific cues provide more activation than general cues.

The kāraka role weights (subject=1.0, object=0.9, locus=0.4) further modulate spreading activation. A subject match contributes more than a locus match, just as ACT-R weights cue-memory associations by type.

Benchmark Results

On LongMemEval (500 questions testing long-term conversational memory):

| Retrieval Method | Accuracy |
|---|---|
| CLS interleave (limit=30) | 86.4% |
| QB-PPR + RRF (limit=50) | 87.4% |

The +1.0 percentage point gain comes primarily from:

- Temporal reasoning: +2.3% (graph connections surface time-ordered events)

- Multi-session: +1.8% (entity connections surface related sessions)

Session recall@10 is 96.4% — nearly all relevant sessions appear in the top 10 results.

The structural arm's contribution is modest but consistent. It surfaces memories that semantic search alone ranks too low.

Implementation Notes

Over-fetching for filtering: We retrieve more candidates than needed (150 semantic, 100 PPR paths) because downstream filters (memory type, mood, temporal range) may discard many. Better to have candidates to filter than to miss relevant memories.

Access recording: Every recall operation records access to the retrieved memories, updating the access_count used in memory strength. Frequently retrieved memories get stronger; rarely retrieved memories fade.

Threshold tuning: The similarity thresholds (0.65 for seed nodes, 0.3 for edge KNN) balance precision and recall. Lower thresholds surface more candidates; higher thresholds surface only strong matches. We err toward recall — let the fusion and strength scoring handle ranking.

The Full Retrieval Pipeline

Query: "What are Alice's bike-related expenses?"

1. SEED SELECTION

- Embed "Alice" → match node "Alice"

- Embed "bike" → match node "hybrid bike"

- Embed "expenses" → match node "budget" (semantic proximity)

Seeds: {Alice, hybrid bike, budget}

2. STRUCTURAL ARM (QB-PPR)

- Initialize PageRank mass at seeds

- Random walk with teleportation (α=0.5)

- Iterate 5 times

- Score edges by constituent node mass

Top edges: maintenance_memory, road_trip_memory, budget_discussion

3. SEMANTIC ARM (KNN)

- Embed full query

- KNN search on edge embeddings

Top edges: maintenance_memory, general_budgeting, shopping_trip

4. FUSION (RRF)

- maintenance_memory: rank 1 in both → RRF = 0.033

- road_trip_memory: rank 2 structural, rank 47 semantic → RRF = 0.025

- budget_discussion: rank 3 structural, rank 8 semantic → RRF = 0.031

Fused ranking: maintenance, budget_discussion, road_trip...

5. STRENGTH FILTERING

- Compute memory_strength for each candidate

- Filter by min_strength threshold

- Sort by RRF × strength

6. RETURN top-k memories

Both bike memories surface. The maintenance memory ranks highest (strong in both arms). The road trip memory ranks lower but still appears (structural arm contribution).
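Steps 4 to 6 can be sketched end to end. The ranks come from the walkthrough above; the strength values, the `min_strength` of 0.1, the `old_note` entry, and the helper name are hypothetical, chosen only to show the filter and the RRF × strength sort.

```python
def rank_by_strength(rrf_scores, strength, min_strength=0.1, top_k=10):
    """Drop weak memories, then sort the rest by RRF score times strength."""
    kept = [(rrf_scores[e] * strength[e], e)
            for e in rrf_scores
            if strength.get(e, 0.0) >= min_strength]
    kept.sort(reverse=True)
    return [e for _, e in kept[:top_k]]


K = 60
# RRF scores from the (structural rank, semantic rank) pairs in the walkthrough.
rrf_scores = {
    "maintenance_memory": 1 / (K + 1) + 1 / (K + 1),
    "road_trip_memory": 1 / (K + 2) + 1 / (K + 47),
    "budget_discussion": 1 / (K + 3) + 1 / (K + 8),
    "old_note": 0.02,  # hypothetical faded memory
}
# Hypothetical memory strengths in [0, 1].
strength = {
    "maintenance_memory": 0.9,
    "road_trip_memory": 0.8,
    "budget_discussion": 0.6,
    "old_note": 0.05,
}

results = rank_by_strength(rrf_scores, strength)
print(results)
```

With these made-up strengths the road trip memory moves ahead of the budget discussion, which shows how strength can reorder the fused ranking, while the faded note is filtered out before sorting.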

Series Conclusion

We started with a thesis: agent memory is primarily a representation problem.

The structure in which you store knowledge determines the ceiling of what retrieval can achieve. Better embeddings, smarter reranking, and fancier algorithms operate below that ceiling. They can't recover what the representation discards.

Hypergraphs preserve the natural n-ary structure of facts. Kāraka roles label how participants relate to actions. PENMAN notation extracts this structure from natural language. Memory types classify what kind of knowledge we're storing. Memory strength models how knowledge fades and persists. Dual-arm retrieval exploits both structure and semantics.

Each layer builds on the one before. The hypergraph enables role labeling. Roles enable precise extraction. Extraction enables typed storage. Types enable appropriate decay. Structure enables graph-based retrieval.

The result is a memory system where the representation preserves what matters, and retrieval can find it.

Hypabase Memory: Dual-Arm Retrieval Built In

Hypabase Memory implements dual-arm retrieval in the recall() API. The structural arm traverses the hypergraph via QB-PPR; the semantic arm searches edge embeddings via sqlite-vec. RRF fusion combines rankings automatically — surfacing memories that are structurally connected, semantically similar, or both.

Previous: The Math Behind What Your Agent Remembers or Forgets

This concludes the Hypabase Memory Series.