Lossless Claw: How DAG-Based Compression Stops AI Agents From Forgetting

How Martian Engineering's DAG-based compression replaces truncation with hierarchical summaries. Why lossless session memory changes everything for long-running AI agents.


AI agents forget.

Not slowly, like humans. Catastrophically. When a conversation exceeds the context window, the agent truncates older messages. Decisions made early in a session vanish. Instructions you gave an hour ago disappear. The agent becomes a different agent — one that doesn't remember what you agreed on.

Lossless Claw, built by Martian Engineering and based on Voltropy's LCM paper, takes a different approach: nothing gets deleted. Ever.

It feels like talking to an agent that never forgets. Because it doesn't.

Standard context management is brutal. When you hit the limit, old messages get cut. The sliding window slides, and history falls off the edge.

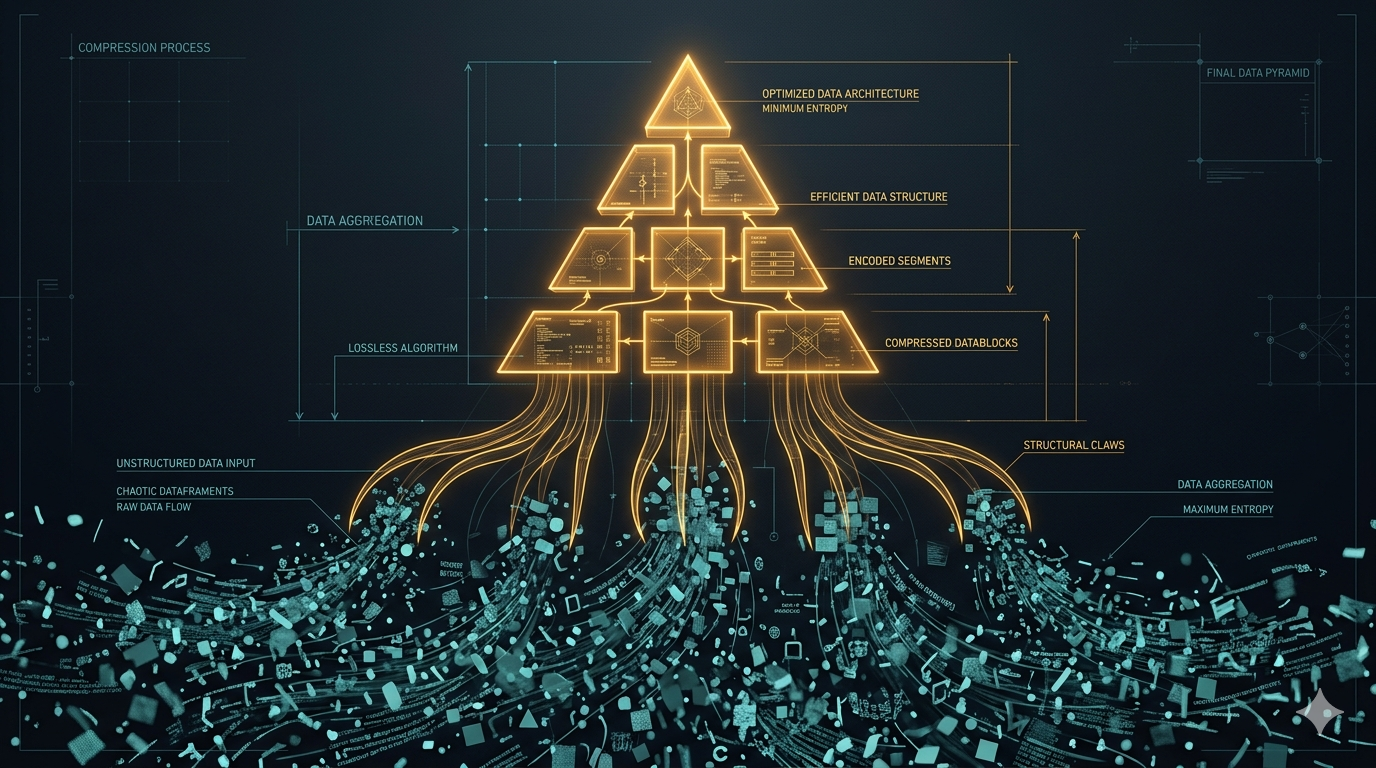

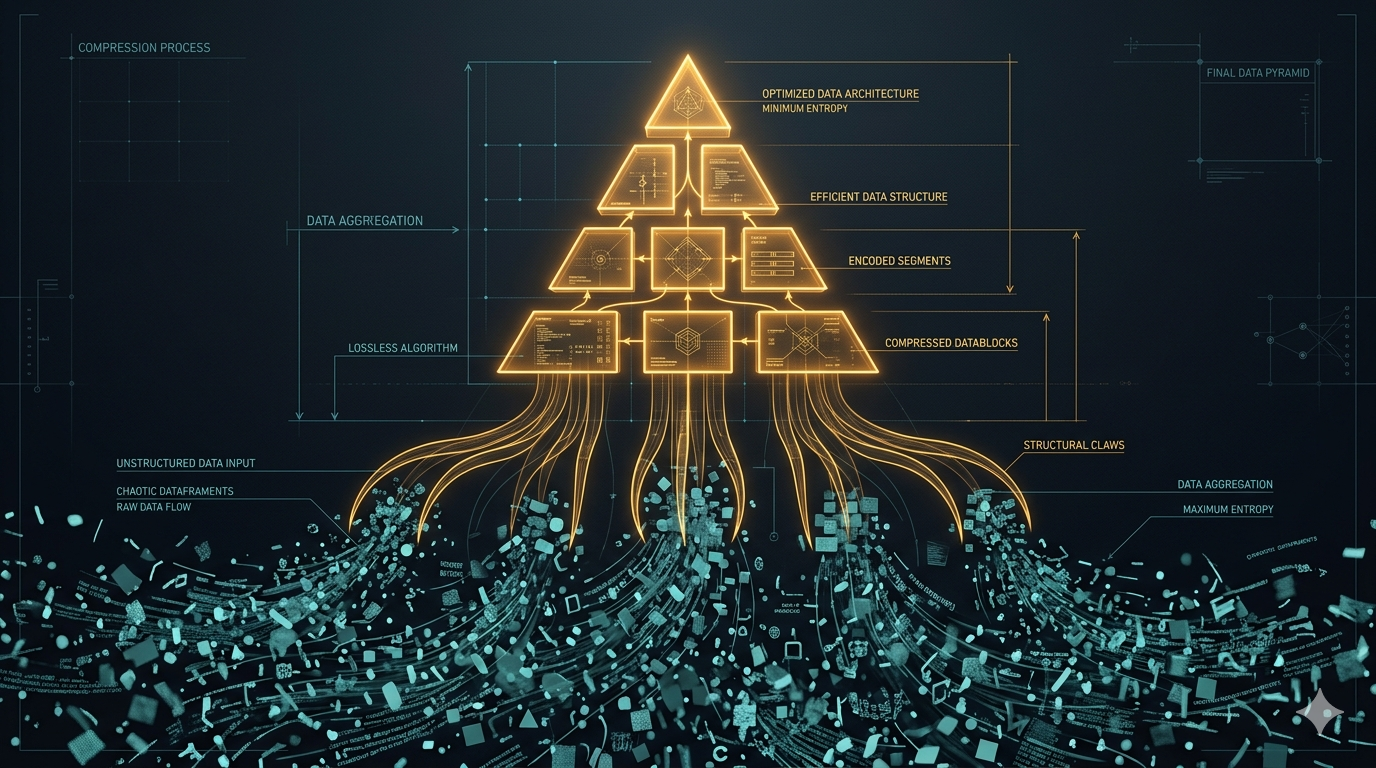

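Lossless Claw replaces truncation with a DAG (directed acyclic graph) of summaries. Older messages are grouped into chunks and summarized, those summaries are condensed into higher-level summaries, and the most recent messages stay verbatim.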

The raw messages never disappear. Summaries link back to their sources. The agent can always dig into details when it needs them.
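To make "summaries link back to their sources" concrete, here is a minimal TypeScript sketch of a summary DAG. The node shapes, the `SummaryDag` class, and its `sourcesOf` method are hypothetical illustrations, not Lossless Claw's actual schema or API. The only point is structural: summaries reference child nodes instead of replacing them, so any summary can be traced back to the raw messages it was built from.

```typescript
// Hypothetical data model for illustration only; not Lossless Claw's real schema.
type RawMessage = { kind: "raw"; id: string; text: string };
type SummaryNode = { kind: "summary"; id: string; text: string; children: string[] };
type DagNode = RawMessage | SummaryNode;

class SummaryDag {
  private nodes = new Map<string, DagNode>();

  // Nodes are only ever added. Creating a summary never removes its children.
  add(node: DagNode): void {
    this.nodes.set(node.id, node);
  }

  // Depth-first walk from any node down to the raw messages it was built from.
  // The `seen` set avoids visiting a shared child twice, since a DAG allows sharing.
  sourcesOf(id: string, seen: Set<string> = new Set()): RawMessage[] {
    if (seen.has(id)) return [];
    seen.add(id);
    const node = this.nodes.get(id);
    if (!node) return [];
    if (node.kind === "raw") return [node];
    const out: RawMessage[] = [];
    for (const child of node.children) out.push(...this.sourcesOf(child, seen));
    return out;
  }
}

const dag = new SummaryDag();
dag.add({ kind: "raw", id: "m1", text: "Reproduced the auth timeout" });
dag.add({ kind: "raw", id: "m2", text: "Network latency looks normal" });
dag.add({ kind: "summary", id: "s1", text: "Reproduced issue, ruled out network latency", children: ["m1", "m2"] });
dag.add({ kind: "summary", id: "root", text: "Debugging auth timeout in production", children: ["s1"] });
console.log(dag.sourcesOf("root").map((m) => m.id).join(", ")); // m1, m2
```

Because nothing is removed when a summary is created, provenance falls out of the data structure itself: walking down from the root always ends at original messages.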

Imagine a 4-hour debugging session. Traditional agents forget the first 3 hours. Lossless Claw builds a tree:

```text
[Root summary: "Debugging auth timeout in production. Identified three potential causes..."]
├── [Summary: "Hour 1-2: Reproduced issue, ruled out network latency..."]
│   ├── [Raw messages 1-50]
│   └── [Raw messages 51-100]
├── [Summary: "Hour 2-3: Found Redis connection pool exhaustion..."]
│   ├── [Raw messages 101-150]
│   └── [Raw messages 151-200]
└── [Raw messages 201-250 (recent, kept verbatim)]
```

The agent's context includes the root summary, relevant branch summaries, and recent raw messages. Full detail where it matters. Compressed context everywhere else.
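The context layout described above can be sketched as a small function. This is a hypothetical illustration, not Lossless Claw's implementation: `assembleContext` and the `Entry` shape are invented names, and deciding which branch summaries are "relevant" is left to the caller. Only the idea of a fixed number of recent messages kept verbatim corresponds to a real setting (`freshTailCount`, covered in the configuration section further down).

```typescript
// Hypothetical sketch: names and assembly order are assumptions, not the plugin's code.
interface Entry {
  role: "summary" | "raw";
  text: string;
}

function assembleContext(
  rootSummary: string,
  branchSummaries: string[], // caller decides which branches are relevant
  messages: string[], // full raw history, oldest first
  freshTailCount: number, // how many recent messages stay verbatim
): Entry[] {
  const tailStart = Math.max(0, messages.length - freshTailCount);
  const context: Entry[] = [{ role: "summary", text: rootSummary }];
  for (const text of branchSummaries) context.push({ role: "summary", text });
  // Older messages appear only through the summaries above;
  // they remain in storage and can be expanded later.
  for (const text of messages.slice(tailStart)) context.push({ role: "raw", text });
  return context;
}

const history = Array.from({ length: 250 }, (_, i) => `message ${i + 1}`);
const ctx = assembleContext(
  "Debugging auth timeout in production. Identified three potential causes...",
  ["Hour 1-2: Reproduced issue...", "Hour 2-3: Found Redis connection pool exhaustion..."],
  history,
  50,
);
console.log(ctx.length); // 53
console.log(ctx[3].text); // message 201
```

A tail of 50 mirrors the tree above, where messages 201-250 stay raw; the documented default is 64.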

When the agent needs specifics from hour 1, it calls lcm_expand to drill into those nodes.

Lossless Claw gives agents tools to navigate compressed history:

lcm_grep — Search through all messages, including compressed ones. Find that discussion about rate limits from three hours ago.

lcm_describe — Get an overview of what's in the compressed history. "What did we discuss in the first half of this session?"

lcm_expand — Drill into a specific summary node. Pull the raw messages back into context when details matter.

The agent doesn't need to hold everything in context. It needs to know where to look and how to retrieve.
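The three tools can be approximated over an in-memory store to show what each one does. The class and method names below (`CompressedHistory`, `grep`, `describe`, `expand`) are hypothetical stand-ins for lcm_grep, lcm_describe, and lcm_expand; the real tools' parameters and output formats are not reproduced here and may differ.

```typescript
// Hypothetical stand-ins for lcm_grep, lcm_describe and lcm_expand.
interface Msg {
  id: number;
  text: string;
}

interface Summary {
  id: string;
  text: string;
  messageIds: number[]; // links back to the raw messages this summary covers
}

class CompressedHistory {
  private messages: Msg[];
  private summaries: Summary[];

  constructor(messages: Msg[], summaries: Summary[]) {
    this.messages = messages;
    this.summaries = summaries;
  }

  // lcm_grep-like: search every stored message, including compacted ones.
  // Pass a regex without the g flag; test() on a global regex is stateful.
  grep(pattern: RegExp): Msg[] {
    return this.messages.filter((m) => pattern.test(m.text));
  }

  // lcm_describe-like: one line per summary node with the message span it covers.
  describe(): string[] {
    return this.summaries.map((s) => {
      const first = Math.min(...s.messageIds);
      const last = Math.max(...s.messageIds);
      return `${s.id} [msgs ${first}-${last}]: ${s.text}`;
    });
  }

  // lcm_expand-like: pull the raw messages behind one summary back out.
  expand(summaryId: string): Msg[] {
    const summary = this.summaries.find((s) => s.id === summaryId);
    if (!summary) return [];
    const wanted = new Set(summary.messageIds);
    return this.messages.filter((m) => wanted.has(m.id));
  }
}

const store = new CompressedHistory(
  [
    { id: 1, text: "Reproduced the auth timeout" },
    { id: 2, text: "Rate limits look fine on the gateway" },
    { id: 3, text: "Redis pool maxed out at 50 connections" },
  ],
  [{ id: "s1", text: "Reproduced issue, checked rate limits", messageIds: [1, 2] }],
);
console.log(store.grep(/rate limit/i).map((m) => m.id).join(", ")); // 2
console.log(store.describe()[0]); // s1 [msgs 1-2]: Reproduced issue, checked rate limits
console.log(store.expand("s1").length); // 2
```

The design choice the sketch highlights: search and expansion run against the full stored history, so the context window only needs summaries plus pointers.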

```bash
npm install lossless-claw
```

Key settings in your config:

```json
{
  "freshTailCount": 64,
  "leafChunkTokens": 80000,
  "contextThreshold": 0.75,
  "incrementalMaxDepth": 1
}
```

freshTailCount — How many recent messages stay raw (not summarized). Default 64.

leafChunkTokens — Token threshold before a chunk gets summarized. Default 80,000.

contextThreshold — Fraction of context window that triggers compaction. At 0.75, compaction starts when you hit 75% capacity.

incrementalMaxDepth — How many levels of summarization happen per turn. Keep it at 1 to avoid latency spikes.
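A sketch of how these settings might interact. The key names and defaults come from the configuration above; the `LcmConfig` type, the `shouldCompact` and `readyLeafChunks` helpers, and the rule that a leaf chunk closes as soon as it reaches `leafChunkTokens` are assumptions made for illustration. Token counts are taken as given rather than computed by a real tokenizer.

```typescript
// Hypothetical sketch: key names and defaults match the documented config,
// but the compaction logic is an assumption, not the plugin's implementation.
interface LcmConfig {
  freshTailCount: number;
  leafChunkTokens: number;
  contextThreshold: number;
  incrementalMaxDepth: number; // not used in this fragment
}

const defaults: LcmConfig = {
  freshTailCount: 64,
  leafChunkTokens: 80_000,
  contextThreshold: 0.75,
  incrementalMaxDepth: 1,
};

interface Turn {
  id: number;
  tokens: number; // assumed to come from a real tokenizer
}

// Compaction triggers once usage reaches the configured fraction of the window.
function shouldCompact(usedTokens: number, windowTokens: number, cfg: LcmConfig): boolean {
  return usedTokens >= cfg.contextThreshold * windowTokens;
}

// Messages outside the fresh tail accumulate into a chunk; once the chunk reaches
// leafChunkTokens it is ready to summarize. A partial chunk waits for later turns.
function readyLeafChunks(turns: Turn[], cfg: LcmConfig): Turn[][] {
  const eligible = turns.slice(0, Math.max(0, turns.length - cfg.freshTailCount));
  const ready: Turn[][] = [];
  let current: Turn[] = [];
  let size = 0;
  for (const turn of eligible) {
    current.push(turn);
    size += turn.tokens;
    if (size >= cfg.leafChunkTokens) {
      ready.push(current);
      current = [];
      size = 0;
    }
  }
  return ready;
}

console.log(shouldCompact(150_000, 200_000, defaults)); // true
const turns = Array.from({ length: 200 }, (_, i) => ({ id: i + 1, tokens: 1_000 }));
console.log(readyLeafChunks(turns, defaults).length); // 1
```

On a hypothetical 200,000-token window, the 0.75 threshold fires at 150,000 tokens. With 200 messages of 1,000 tokens each, the 64-message tail stays raw, the first 80 older messages (80,000 tokens) form one ready chunk, and the remaining 56 wait until they reach the threshold.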

Lossless Claw uses your configured LLM for summarization. You can point it at a cheaper model (like Haiku) to save costs on compression work.
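Because summarization is just a call to a model, it can be modeled as a plain function that is swapped per provider. The sketch below also shows one way a per-turn depth limit like `incrementalMaxDepth` could behave: each turn performs at most that many condensation passes, so the tree deepens gradually instead of all at once. The `Summarizer` type, `condenseOneTurn`, the frontier layout, and the `fanIn` parameter are hypothetical. The summarizer is synchronous here for brevity; a real LLM call would be asynchronous.

```typescript
// Hypothetical sketch; not Lossless Claw's internals.
type Summarizer = (texts: string[]) => string;

interface SummaryNode {
  text: string;
  children: SummaryNode[]; // condensed nodes are kept as children, never discarded
}

// frontier[d] holds depth-d nodes that have no parent yet (depth 0 = leaf summaries).
function condenseOneTurn(
  frontier: SummaryNode[][],
  summarize: Summarizer,
  maxDepth: number, // plays the role of incrementalMaxDepth
  fanIn: number, // hypothetical: how many nodes one parent summarizes
): number {
  let passes = 0;
  for (let depth = 0; depth < frontier.length && passes < maxDepth; depth++) {
    if (frontier[depth].length < fanIn) continue;
    // Move the oldest fanIn nodes under a new parent one level up.
    const children = frontier[depth].splice(0, fanIn);
    const parent: SummaryNode = { text: summarize(children.map((c) => c.text)), children };
    if (depth + 1 === frontier.length) frontier.push([]);
    frontier[depth + 1].push(parent);
    passes++;
  }
  return passes;
}

// Stand-in for a cheaper model; any provider could sit behind this function.
const cheapModel: Summarizer = (texts) => `summary of ${texts.length} nodes`;

const frontier: SummaryNode[][] = [
  [1, 2, 3, 4].map((i) => ({ text: `leaf ${i}`, children: [] })),
];
for (let turn = 1; turn <= 3; turn++) condenseOneTurn(frontier, cheapModel, 1, 2);
console.log(frontier.map((level) => level.length).join(" / ")); // 0 / 0 / 1
```

With the limit at 1, the root appears only on the third turn. A larger limit lets a single turn climb several levels at once, trading per-turn latency for faster consolidation.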

The problem with flat summarization: you lose structure. A single summary of 4 hours of work is too compressed to be useful.

The problem with no summarization: you hit context limits, and truncation throws away everything that falls outside the window.

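DAG-based summarization threads the needle: recent work stays verbatim, older spans are compressed into summaries, higher-level summaries preserve the overall shape of the session, and every node still links back to its raw messages.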

This is how human memory works. You don't remember every word from last week. But you remember the gist, and you can reconstruct details when you focus.

True persistence. Not "persist until context fills up." Actual persistence. Every message, forever.

Structured compression. Summaries maintain hierarchy. You can navigate the history like a tree, not search through a flat log.

Agent-accessible. The agent has tools to search and expand. It's not blind to its own history — it can choose what to remember in detail.

Provider-agnostic. Works with any LLM provider. Use Opus for your main work, Haiku for summarization.

Lossless Claw is session memory. Excellent session memory. But it has boundaries.

Cross-session continuity. Each conversation is its own DAG. Knowledge from yesterday's session doesn't automatically flow into today's.

Semantic deduplication. If you discussed the same topic in hour 1 and hour 3, you get two summaries. No automatic consolidation.

Decay. History accumulates forever. The SQLite database grows. There's no mechanism for graceful forgetting.

Structured knowledge. Summaries are natural language. Facts aren't extracted into queryable structures.

These aren't failures — they're scope boundaries. Lossless Claw does session memory better than anything else. Cross-session knowledge requires different architecture.

Lossless Claw proves a principle: compression beats truncation.

Throwing away history is the wrong default. The information existed. The agent saw it. Deleting it makes the agent dumber than it was 2 hours ago.

But compression without structure creates different problems. You need hierarchy. You need navigation tools. You need the ability to expand when details matter.

The six problems any serious memory system must address:

Lossless Claw handles temporal ordering well and provides strong source tracing. It proves that never-forgetting session memory is achievable. What it doesn't attempt is cross-session knowledge accumulation or semantic structuring.

We're building Hypabase to go further — memory that consolidates across sessions, structures knowledge semantically, and decays gracefully so signal doesn't drown in noise.

Summarize, don't truncate. Losing history makes agents worse. Compression preserves it.

Hierarchy matters. Flat summaries lose structure. DAG-based compression maintains navigable history.

Agents need tools. Perfect memory isn't enough. Agents need ways to search, describe, and expand their own history.

Session memory is solvable. Lossless Claw proves it. The hard problem is cross-session accumulation.

Verification is built-in. Every summary traces to raw messages. Provenance comes free with the architecture.

Lossless Claw represents a fresh approach to session memory that's gaining traction in the OpenClaw community. For long-running agentic work — multi-hour debugging, extended research, complex planning — it's the difference between an agent that forgets and one that doesn't.

Try Hypabase Memory — agentic memory that compounds across sessions.

Related: Karpathy's LLM Wiki: Why the Future of AI Memory Isn't RAG | Tobi Lütke's QMD: Why Shopify's CEO Built His Own AI Memory System